Camera over Ethernet (CoE)

Overview

Camera over Ethernet (CoE) is NVIDIA's next-generation camera connectivity solution. It is fully supported in the Safe Image Processing Library (SIPL) framework, which provides a unified API and driver model to support seamless bring-up of Holoscan Sensor Bridge (HSB) based cameras.

CoE is designed as a new camera solution that replaces traditional MIPI CSI / GMSL links. It uses standard Ethernet (MGBE controller + IEEE 1722b) to transport raw image data instead of point-to-point serial interfaces.

MIPI and GMSL cameras interfaced with an HSB can also be supported through the CoE architecture and the SIPL framework.

Use cases:

- Industrial and robotics vision with long cable runs.

- Smart city and surveillance camera networks.

- Flexible, scalable multi-camera platforms.

Advantages:

- Scalability and modularity (multiple cameras on a shared network).

- Data integrity provided by the Ethernet protocol (per-frame CRC).

- Easy integration with other Ethernet-based sensors (such as radar and lidar) for future development.

- Rapid prototyping and validation of new camera modules.

- Reduced cabling and cost using commodity infrastructure.

- Support for multi-cast and flexible topologies.

Table 1 compares the characteristics of CoE and MIPI CSI/GMSL:

| Characteristic | CoE | MIPI/GMSL |

|---|---|---|

| Cable Length | More than 100 m (Ethernet) | GMSL: ~15 m; MIPI CSI: less than 1 m |

| Topology | Star, daisy-chain | Point-to-point |

| Networking | Standard Ethernet | Proprietary |

| Hot-plug Support | Yes | Limited |

| Scalability | High | Moderate |

| Interoperability | High | Low |

| Cost | Lower (commodity) | Higher (custom) |

| Bandwidth | More than 1 Gbps | Up to 6 Gbps (GMSL) |

CoE also has some limitations:

- Latency might be greater than direct MIPI CSI.

- Requires Ethernet infrastructure and configuration.

- Some advanced camera features might require custom driver support.

Table 2 shows the image pixel formats supported by CoE:

| Format | Description |

|---|---|

| RAW8 | 8 bits per pixel, sent as individual bytes. |

| RAW10 | 10 bits per pixel, packed (3 pixels in 4 bytes). |

| RAW12 | 12 bits per pixel, packed (2 pixels in 3 bytes). |

| RAW14 | 14 bits per pixel, sent in 2 bytes. |

| RAW16 | 16 bits per pixel, sent in 2 bytes. |

| RAW20 | Companded to fit in 2 bytes. |

| RAW24 | Sent in 3 bytes or companded. |

| RAW28 | Companded to fit in 2 bytes. |

| RAW32 | Sent in 4 bytes or companded. |
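
To get a feel for what the packing rules in Table 2 mean on the wire, the following minimal sketch estimates the bytes per image line and the raw payload rate for the uncompanded formats. It assumes that each line is padded up to a whole packing group (for example, 3 pixels per 4 bytes for RAW10) and it ignores Ethernet and protocol overhead; the function names are illustrative and are not part of SIPL.

```python
import math

# (pixels per group, bytes per group) for the uncompanded formats in Table 2.
PACKING = {
    "RAW8": (1, 1),
    "RAW10": (3, 4),  # packed: 3 pixels in 4 bytes
    "RAW12": (2, 3),  # packed: 2 pixels in 3 bytes
    "RAW14": (1, 2),
    "RAW16": (1, 2),
}


def line_bytes(fmt: str, width: int) -> int:
    """Bytes for one image line, rounded up to whole packing groups.

    Assumption: a partially filled group at the end of a line is padded.
    """
    pixels, nbytes = PACKING[fmt]
    return math.ceil(width / pixels) * nbytes


def payload_gbps(fmt: str, width: int, height: int, fps: float) -> float:
    """Raw pixel payload in Gbit/s, without Ethernet or protocol overhead."""
    return line_bytes(fmt, width) * height * fps * 8 / 1e9


if __name__ == "__main__":
    # Estimate every format for a 3280 x 2464 sensor mode at 30 fps.
    for fmt in PACKING:
        print(fmt, line_bytes(fmt, 3280), round(payload_gbps(fmt, 3280, 2464, 30.0), 2))
```

Under these assumptions, a 3280 x 2464 stream at 30 fps in RAW10 needs 4376 bytes per line and about 2.59 Gbit/s of payload, which is a useful sanity check when choosing the Ethernet link speed.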

Workflow

Think of CoE as an image path that starts at the camera. The camera is the eye that captures a scene and converts it into pixels in raw format. It can connect to the Jetson Thor in two ways:

- Directly through an Ethernet cable (Jetson Thor supports this, but you need to check whether the camera offers this connection type).

- Through MIPI to the HSB, whose Ethernet output goes directly to the Jetson Thor.

The Holoscan Sensor Bridge (HSB) works like a converter: it receives the MIPI pixels in raw format and sends that pixel data as Ethernet packets (via hololink) through the corresponding output port to the Jetson Thor.

The HSB also lets us configure the sensor, because it can forward control commands to it.

For example, assume that your camera connects directly to Ethernet; in that case you need the HSB only to configure the sensor through control commands.

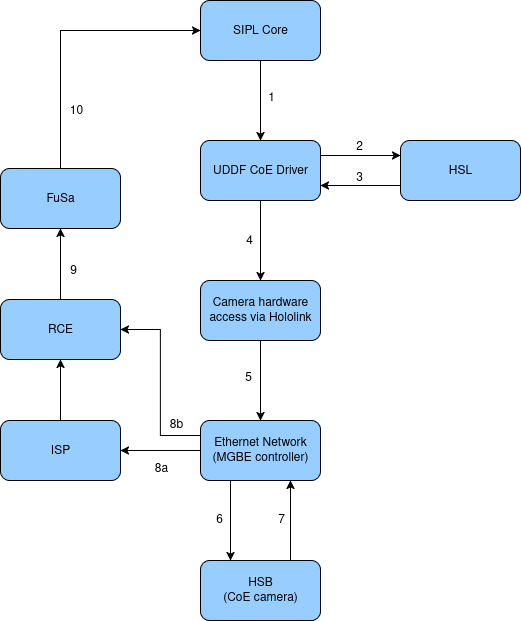

The CoE architecture consists of the following major components:

- Application

  - The end user of the pipeline. It consumes the images and acts on them, for example by displaying them, recording them, or running object recognition.

- SIPL Core and Pipeline

  - Provides APIs for SIPL applications to initialize camera pipelines.

  - Manages capture streams and buffers (both raw and ISP-processed outputs).

  - Reports events and errors via SIPL notifications.

- Camera Drivers (via UDDF/HSL)

  - Implements camera bring-up and control within a standardized driver model.

  - Discovers and configures HSB and CoE camera devices on the network.

- Camera Hardware Access (via Hololink drivers and Holoscan bridge library)

  - Provides I2C tunneling through HSB to configure sensors and related module components.

- Ethernet Network/PHY (MGBE Controller)

  - Receives and classifies Ethernet packets containing camera data and sends them directly to memory.

- RCE Firmware

  - Manages DMA channels, handles interrupts, and processes frame events. In short, it continuously monitors that frames arrive intact and without errors.

Software flow steps in CoE operation:

- Platform initialization

  - Power up Ethernet (MGBE), configure clocks, and bring up Rx channels.

- Application startup (SIPL)

  - Load camera config (JSON), initialize SIPL pipeline, and allocate capture buffers.

- RCE setup

  - Configure DMA channels, interrupts, and classification rules.

- Network configuration

  - Set up Ethernet interface, optional MACsec, and enable frame reception.

- Device discovery

  - SIPL/UDDF discovers HSB bridges and camera sensors over Ethernet.

  - Control path: I2C tunneling through HSB for sensor configuration.

- Capture and processing

  - Camera data is streamed via Ethernet and delivered as raw or ISP-processed buffers to apps.

- Event reporting

  - SOF/EOF and status events are reported back to SIPL apps.
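
The ordering of the steps above matters, because each stage depends on the previous one. The following sketch only models that ordering: it runs placeholder stage functions in sequence and stops at the first one that fails. The stage names mirror the list above, while `run_bring_up` and the callables are hypothetical and do not correspond to real SIPL or RCE calls.

```python
from typing import Callable, List, Tuple

# Stage names follow the software flow list; they are labels, not real API names.
STAGES = [
    "platform_initialization",
    "application_startup",
    "rce_setup",
    "network_configuration",
    "device_discovery",
    "capture_and_processing",
    "event_reporting",
]


def run_bring_up(steps: List[Tuple[str, Callable[[], bool]]]) -> List[str]:
    """Run the steps in order and return the names of the stages that completed.

    Execution stops at the first step that returns False, since later stages
    depend on earlier ones (for example, discovery needs a configured network).
    """
    completed = []
    for name, step in steps:
        if not step():
            break
        completed.append(name)
    return completed


if __name__ == "__main__":
    # Simulate a run in which the network configuration stage fails.
    steps = [(name, (lambda n=name: n != "network_configuration")) for name in STAGES]
    print(run_bring_up(steps))
```

In a real system each callable would wrap the corresponding platform or SIPL operation; the value of the sketch is only to show that a failure in an early stage prevents discovery and capture from starting.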

Data and control path

The data path in CoE receives the raw image data from the camera sensor and delivers it to the SIPL applications, either raw or after ISP processing, along with the capture completion status and events, through RCE and the FuSa Capture library.

The control path in CoE manages camera configuration and I2C tunneling.

This path ensures that all configuration and control commands reach the camera sensor and, if present, the EEPROM and lens devices, even when they are connected via an HSB.

- UDDF (Unified Device Driver Framework):

  - Modular driver model for transport (Ethernet/HSB), sensors, EEPROM, and lenses.

  - JSON-based configuration ensures consistency.

- HSL (Hardware Sequence Language):

  - Scripts for I2C/GPIO sequences (sensor reset, power-on, sync, multi-sensor triggers).

  - Enables flexible and advanced camera bring-up.

Developers can build drivers once using UDDF/HSL and deploy them across CoE-enabled cameras without rewriting transport logic.

For detailed information about HSL and UDDF, refer to HSL and UDDF Overview.

Camera and Transport Configuration

Devices are configured through I2C bus transactions issued from the Jetson platform and tunneled through the HSB over the Ethernet network.

| Camera Interface | How I2C works |

|---|---|

| HSB / CoE | I2C traffic is tunneled over Ethernet through the network (even across switches) to the HSB bridge, which forwards it to the sensor as I2C. Transparent to host software. |

| MIPI CSI | The I2C bus is directly connected to the Jetson SoC; the host software directly controls the sensor. |

| GMSL | I2C traffic is tunneled through the SerDes chips, and the host perceives it as a regular I2C bus even though it is carried inside the GMSL link. |

SIPL

SIPL is a framework for camera and sensor integration, image processing, and control.

It supports the CoE architecture for HSB-based camera sensors.

Within this workflow, the parts we can edit when using SIPL are:

- Application (NvSIPL or HSB)

- UDDF (Transport driver, camera sensor driver, sensor pyHSL)

- Hololink

Let's look at the main components of the SIPL framework:

- JSON configuration: describes the camera sensor and transport configuration using definitions such as camera name, MAC and IP addresses, and transport parameters.

- SIPL Query API: methods for SIPL applications to configure or validate the camera and transport configuration. It enables runtime discovery and selection of camera modules. It loads the JSON configuration file and decouples camera configuration from application code, making it easy to add, remove, or edit camera setups without recompiling the application or driver libraries.

- SIPL Control API: methods for SIPL applications to control the camera and transport.

- SIPL Application structure: applications use the Query and Control APIs to configure and control camera sensors. Check sipl/samples to review samples.

- Camera Sensor Driver Structure: sensor drivers are implemented using UDDF libraries. All hardware access is performed via HSL sequences and hololink drivers. CoE sensor drivers are provided with the SIPL package; check the sipl/uddf/samples/ directory to review driver samples.

- Interfaces, classes and structures:

  - SIPL interfaces: check sipl/include/

  - UDDF interfaces and structures: uddf/include/uddf/

  - Camera structures: sipl/include/NvSIPLCameraTypes.hpp

JSON files in SIPL define transport, addresses, and sensor/bridge parameters consistently. Let's see how to write SIPL Query JSON files for CoE.

To do this, you can start from an example and use it as a template (sipl/query/database/coe/).

The JSON file contains several sections, but let's focus on these two main blocks:

- cameraConfigs describes the camera: sensor details, network properties, HSB mapping.

- transportSettings defines how Jetson communicates with the HSB (interface, IP, VLAN, HSB ID).

Table 3 describes each field inside the cameraConfigs block:

| Field | Type | Description | Example |

|---|---|---|---|

| name | string | Unique name of the camera. | "IMX219_CoE" |

| type | string | Transport type. For Camera-over-Ethernet, must be "CoE". | "CoE" |

| platform | string | Platform/camera family name. Used internally by SIPL. | "IMX219" |

| platformConfig | string | Predefined platform configuration for Jetson Thor (optional). | "Thor_IMX219" |

| description | string | Free text description. Helpful if multiple cameras are connected. | "IMX219 over CoE" |

| sensorInfo | object | Describes the sensor (resolution, format, CFA, fps, etc.). | see subtable 1 |

| CoECamera | object | CoE-specific configuration (HSB + network settings). | see subtable 2 |

| isEEPROMSupported | bool | Indicates if the module has accessible EEPROM. Usually false for prototypes. | false |

| sensor | object | Sensor name. Must match driver naming. | { "name": "IMX219" } |

| cryptoConfigName | string | Crypto/security configuration. Often provided as a template per sensor. | "JETSON_AGX_THOR_DEVKIT_IMX219" |

| mac_address | string | MAC address of the HSB device. Must match hardware. | "8c:1f:64:6d:70:03" |

| ip_address | string | Static IP address of the HSB device. | "192.168.1.2" |

| hsb_id | int | ID of the HSB bridge. Each HSB must have a unique ID. | 0 |

Subtable 1 details the sensorInfo fields, which describe the sensor and its output modes (RAW, CFA, resolution, fps):

| Field | Type | Description | Example |

|---|---|---|---|

| id | int | Unique identifier of the sensor in the system. Must be sequential (0,1,2…). | Example |

| name | string | Sensor name (must match the UDDF driver). | Example |

| description | string | Free text description. | Example |

| i2cAddress | string | Sensor I2C address, used in HSL/UDDF for configuration. | Example |

| virtualChannels[] | array | Defines supported streaming modes (resolution, format, fps, CFA). | see subsubtable 1 |

Subsubtable 1 details the virtualChannels fields, which define the sensor streaming mode:

| Field | Type | Description | Example |

|---|---|---|---|

| cfa | string | Color Filter Array (Bayer pattern: rggb, gbrg, grbg, bggr). | "rggb" |

| inputFormat | string | Sensor output format (RAW8, RAW10, RAW12, etc.). | "raw10packed" |

| width | int | Frame width in pixels. | 3280 |

| height | int | Frame height in pixels. | 2464 |

| fps | float | Frames per second. | 30.0 |

| embeddedTopLines | int | Number of metadata lines above the image frame. | 1 |

| embeddedBottomLines | int | Number of metadata lines below the frame. | 1 |

| isEmbeddedDataTypeEnabled | bool | If embedded lines should be interpreted as data. | false |
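
As an illustration of how one virtualChannels entry could be checked before it goes into a JSON file, the sketch below mirrors the fields of the table above in a small Python dataclass. The field names and the list of Bayer patterns come from the table; the `VirtualChannel` class, its validation rules, and the helper names are assumptions made for this example and are not part of the SIPL API.

```python
from dataclasses import dataclass
from typing import List

VALID_CFA = {"rggb", "gbrg", "grbg", "bggr"}  # Bayer patterns listed in the table


@dataclass(frozen=True)
class VirtualChannel:
    """One streaming mode; field names mirror the virtualChannels table."""
    cfa: str
    inputFormat: str
    width: int
    height: int
    fps: float
    embeddedTopLines: int = 0
    embeddedBottomLines: int = 0
    isEmbeddedDataTypeEnabled: bool = False

    def problems(self) -> List[str]:
        """Return validation errors; an empty list means the entry looks sane."""
        errors = []
        if self.cfa not in VALID_CFA:
            errors.append(f"unknown cfa {self.cfa!r}")
        if self.width <= 0 or self.height <= 0:
            errors.append("width and height must be positive")
        if self.fps <= 0:
            errors.append("fps must be positive")
        if self.embeddedTopLines < 0 or self.embeddedBottomLines < 0:
            errors.append("embedded line counts cannot be negative")
        return errors

    def total_lines(self) -> int:
        """Image lines plus the embedded metadata lines above and below."""
        return self.height + self.embeddedTopLines + self.embeddedBottomLines


def parse_channel(entry: dict) -> VirtualChannel:
    """Build a VirtualChannel from one JSON-style dictionary."""
    return VirtualChannel(**entry)


if __name__ == "__main__":
    # Values taken from the Example column of the table.
    channel = parse_channel({
        "cfa": "rggb", "inputFormat": "raw10packed", "width": 3280,
        "height": 2464, "fps": 30.0, "embeddedTopLines": 1,
        "embeddedBottomLines": 1, "isEmbeddedDataTypeEnabled": False,
    })
    print(channel.problems(), channel.total_lines())
```

With the example values from the table, the entry passes the checks and the mode carries 2466 lines in total (2464 image lines plus one embedded line above and one below).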

Subtable 2 shows the CoECamera fields, which define how the sensor connects over Ethernet:

| Field | Type | Description | Example |

|---|---|---|---|

| isStereo | bool | Marks if the camera is part of a stereo pair. | false |

| hsbSensorIndex | int | Index of the sensor within the same HSB (0 or 1). | 0 |

| hsb_id | int | HSB ID (must match transportSettings). | 0 |

| sensor | object | Defines MAC/IP of the sensor within the network. | see Subsubtable 2 |

Subsubtable 2 shows the sensor fields that describe the MAC and IP addresses:

| Field | Type | Description | Example |

|---|---|---|---|

| mac_address | string | MAC address of the HSB device. | "8c:1f:64:6d:70:03" |

| ip_address | string | Static IP address of the HSB device. | "192.168.1.2" |

Now let's focus on the fields of the transportSettings block:

| Field | Type | Description | Example |

|---|---|---|---|

| name | string | Transport name. Must match the camera configuration. | "IMX219_Transport" |

| compatible_name | string | Transport driver type. For CoE always "HsbTransport". | "HsbTransport" |

| type | string | Transport type (Ethernet-based). | "CoE" |

| description | string | Free text description. | "Transport settings for IMX219" |

| if_name | string | Ethernet interface name on Jetson Thor. | "mgbe0_0" |

| ip_address | string | IP address of the HSB/camera device. | "192.168.1.2" |

| vlan_enable | bool | Enable VLAN tagging if required by network setup. Usually false. | false |

| hsb_id | int | ID of the HSB. Must match the one used in cameraConfigs. | 0 |

For further information about the SIPL Query JSON for CoE camera development, refer to the SIPL Query Json Guide.
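
To see how the two blocks fit together, the following sketch builds a small configuration from the example values in the tables above, serializes it to JSON, and cross-checks the fields that the tables say must agree. The nesting shown here (for instance, placing mac_address and ip_address under CoECamera.sensor) is an assumption based on the subtables, the i2cAddress field is left out because the tables give no value for it, and `check_config` is a hypothetical helper, not a SIPL function. Use the templates in sipl/query/database/coe/ as the authoritative layout.

```python
import json

# Assumed layout; values come from the Example columns of the tables above.
config = {
    "cameraConfigs": [
        {
            "name": "IMX219_CoE",
            "type": "CoE",
            "platform": "IMX219",
            "description": "IMX219 over CoE",
            "sensorInfo": {
                "id": 0,
                "name": "IMX219",
                "virtualChannels": [
                    {"cfa": "rggb", "inputFormat": "raw10packed",
                     "width": 3280, "height": 2464, "fps": 30.0},
                ],
            },
            "CoECamera": {
                "isStereo": False,
                "hsbSensorIndex": 0,
                "hsb_id": 0,
                "sensor": {"mac_address": "8c:1f:64:6d:70:03",
                           "ip_address": "192.168.1.2"},
            },
            "isEEPROMSupported": False,
            "sensor": {"name": "IMX219"},
        }
    ],
    "transportSettings": [
        {
            "name": "IMX219_Transport",
            "compatible_name": "HsbTransport",
            "type": "CoE",
            "if_name": "mgbe0_0",
            "ip_address": "192.168.1.2",
            "vlan_enable": False,
            "hsb_id": 0,
        }
    ],
}


def check_config(cfg: dict) -> list:
    """Return a list of consistency errors (empty when none are found)."""
    errors = []
    transport_ids = {t["hsb_id"] for t in cfg["transportSettings"]}
    for cam in cfg["cameraConfigs"]:
        if cam["type"] != "CoE":
            errors.append(f"{cam['name']}: type must be 'CoE'")
        hsb_id = cam["CoECamera"]["hsb_id"]
        if hsb_id not in transport_ids:
            errors.append(f"{cam['name']}: no transportSettings entry with hsb_id {hsb_id}")
    for transport in cfg["transportSettings"]:
        if transport["compatible_name"] != "HsbTransport":
            errors.append(f"{transport['name']}: compatible_name must be 'HsbTransport'")
    return errors


if __name__ == "__main__":
    print(json.dumps(config, indent=2))
    print(check_config(config))
```

A check like this is cheap to run before deploying a file, and it catches the most common mistake when several HSBs are present: a camera whose hsb_id does not appear in any transportSettings entry.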

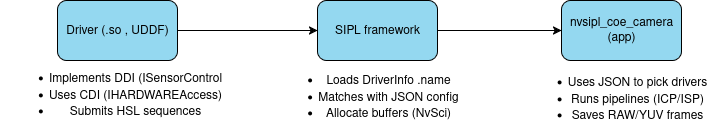

UDDF Drivers with SIPL

UDDF drivers are the part that you code. You create C++ drivers that implement DDI interfaces (ISensorControl, IReadWriteI2C) and expose them through GetInterface().

The driver does not interact directly with the hardware; instead, it uses CDI interfaces such as IHardwareAccess and HSL sequences.

To integrate the driver with SIPL, you need to know that each driver defines a DriverInfo with a .name field. This .name must match the names used in the JSON:

- The sensor name in cameraConfigs -> your camera driver.

- transportSettings.compatible_name -> your transport driver.

SIPL scans /usr/lib/nvsipl_uddf/, loads your .so, calls uddf_discover_drivers(), and matches the names against the JSON. Once they match, SIPL can call the driver's interfaces.

At this point the drivers become discoverable and usable by SIPL.
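
The name matching described above can be pictured with a short model. The sketch below is not the SIPL loader: it is a plain Python illustration in which `DriverInfo`, `match_drivers`, and the library file names are hypothetical, and in which the camera driver is assumed to be matched against the sensor name from cameraConfigs and the transport driver against transportSettings.compatible_name.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class DriverInfo:
    """Simplified stand-in for the information a driver advertises."""
    name: str     # name that must match the JSON configuration
    library: str  # hypothetical shared object the driver was loaded from


def match_drivers(discovered: List[DriverInfo],
                  wanted: Dict[str, str]) -> Dict[str, DriverInfo]:
    """Map each role ('camera', 'transport') to the driver with a matching name.

    Raises LookupError that lists every name the JSON asks for but no
    discovered driver provides.
    """
    by_name = {driver.name: driver for driver in discovered}
    missing = [f"{role}={name}" for role, name in wanted.items() if name not in by_name]
    if missing:
        raise LookupError("no driver found for: " + ", ".join(missing))
    return {role: by_name[name] for role, name in wanted.items()}


if __name__ == "__main__":
    # Pretend these were reported by the drivers found in the scan directory.
    discovered = [
        DriverInfo(name="IMX219", library="libexample_imx219.so"),
        DriverInfo(name="HsbTransport", library="libexample_hsb_transport.so"),
    ]
    # Names taken from the JSON: the sensor name and compatible_name.
    wanted = {"camera": "IMX219", "transport": "HsbTransport"}
    for role, driver in match_drivers(discovered, wanted).items():
        print(role, "->", driver.library)
```

The practical consequence is that a typo in either the JSON or the driver's .name leaves the driver loaded but unused, so a mismatch should be the first thing to check when a camera is not detected.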

By using the nvsipl_coe_camera application we can test and use the driver. It shows:

- How SIPL loads your drivers via the JSON config.

- How SIPL configures pipelines:

  - ICP = raw capture output.

  - ISP0/ISP1/ISP2 = processed YUV outputs.

- How SIPL allocates buffers with NvSciBuf.

- How SIPL synchronizes with NvSciSync fences.

- How SIPL creates threads to consume image data (RAW, YUV).

You can run the app with:

nvsipl_coe_camera -t imx219.json -R -0 -W 5 -f test

At this level, the application is the consumer of the driver.

The next image shows a big picture of how this process is connected:

PyHSL is the toolchain you use to create HSL sequences for your driver, for example sensor init, start streaming, etc. These are compiled into .hpp headers that your driver uses with SubmitSequence().

Note: nvsipl_coe_camera indirectly executes these sequences when SIPL calls your driver.

What RidgeRun can offer

- Driver & BSP Customization: Develop and integrate UDDF/HSL drivers for new sensors over CoE.

- GStreamer Pipelines: Create low-latency pipelines for real-time video capture and processing.

- Vision Algorithms: Provide optimized CUDA/OpenCV plugins (stitching, stabilization, bird’s eye view).

- System Integration: Configure SIPL pipelines, JSON transport configs, and ensure seamless ISP integration.

- Rapid Prototyping → Production: RidgeRun accelerates camera bring-up, testing, and scaling multi-camera systems with CoE.