RidgeRun Linux Camera Drivers - Examples - RB5

RB5 Capture SubSystem

The Qualcomm Robotics RB5 Development Kit is a platform designed for robotics development, featuring the Qualcomm QRB5165 processor. Customized for a wide range of robotics applications, this kit supports extensive prototyping with its 96Boards open hardware specification and mezzanine-board expansions. With 15 TOPS of compute power, advanced AI capabilities, and support for multiple cameras, it's used for creating autonomous robots and drones.

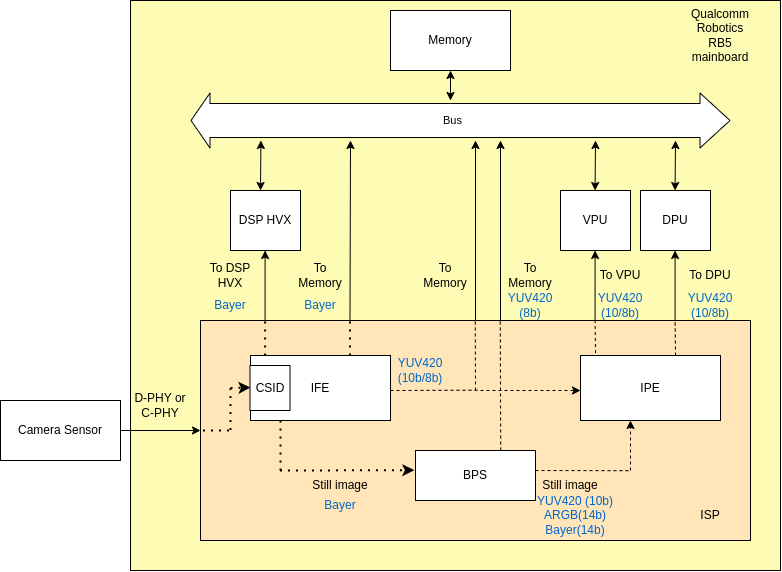

The next figure shows the basic hardware modules of the RB5 capture subsystem and their interconnections.

- Camera Sensor

Communicates with the mainboard over MIPI CSI-2 interfaces, using either the C-PHY or the D-PHY physical layer.

- Image Front-End (IFE)

The ISP contains 2 full IFEs for sensors with up to 25MP input resolution and 5 IFE_lite modules for sensors with up to 2MP input resolution. These modules provide Bayer processing for video and preview streams.

- Bayer Processing Segment (BPS)

Processes the image data from the IFE, handles Bayer processing and downscaling, and supports multiple formats to allow a flexible mix of hardware and software processing.

- Image Processing Engine (IPE)

Further processes the image data, for example through image correction and adjustment, noise reduction, and temporal filtering.

You can find more information on the hardware and software components of the capture subsystem in the following wiki.

OpenCV Examples

The following examples show basic Python code that uses OpenCV to capture from either a USB camera or a MIPI CSI camera. To use the code, make sure OpenCV is installed on your RB5 board. You can install it with this command:

sudo apt-get install python3-opencv

After the installation completes, run the following command (camera_capture.py contains the code shown below):

python3 camera_capture.py
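Before running the examples, it can help to confirm that the installed OpenCV build can be imported and that it was compiled with GStreamer support, since the MIPI CSI example passes a GStreamer pipeline to `cv2.VideoCapture`. The sketch below is only a sanity check, not part of the original examples. It assumes that the text returned by `cv2.getBuildInformation()` contains a line of the form `GStreamer: YES (...)`, which is how OpenCV builds usually report it. The helper name `gstreamer_enabled` is hypothetical.

```python
import re


def gstreamer_enabled(build_info: str) -> bool:
    """Return True if an OpenCV build-information dump reports 'GStreamer: YES'."""
    # Assumption: the dump contains a line such as "GStreamer:   YES (1.16.2)".
    match = re.search(r"^\s*GStreamer:\s*(\S+)", build_info, flags=re.MULTILINE)
    return bool(match) and match.group(1).upper() == "YES"


if __name__ == "__main__":
    try:
        import cv2
    except ImportError:
        print("OpenCV (cv2) is not installed for this Python interpreter.")
    else:
        print(f"OpenCV version: {cv2.__version__}")
        enabled = gstreamer_enabled(cv2.getBuildInformation())
        print(f"GStreamer backend enabled: {enabled}")
```

If the check reports that GStreamer is not enabled, the USB camera example may still work, but the MIPI CSI example is unlikely to open its pipeline.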

USB camera

The following Python code shows a basic example using OpenCV to capture from a USB camera:

import sys

import cv2

CAMERA_INDEX = 0          # Index of the camera to open (0 is typically /dev/video0)
TOTAL_NUM_FRAMES = 100    # Number of frames to record
FRAMERATE = 30.0          # Frame rate written to the output file


def is_camera_available(camera_index=0):
    """Return True if the camera at camera_index can be opened."""
    cap = cv2.VideoCapture(camera_index)
    if not cap.isOpened():
        return False
    cap.release()
    return True


def main():
    if is_camera_available(CAMERA_INDEX):
        print(f"Camera {CAMERA_INDEX} is available.")
    else:
        print(f"Camera {CAMERA_INDEX} is not available.")
        sys.exit(1)

    cap = cv2.VideoCapture(CAMERA_INDEX)
    if not cap.isOpened():
        print("Error: Could not open video stream.")
        sys.exit(1)
    print("Camera opened successfully.")

    # Use the resolution reported by the camera for the output file
    frame_width = int(cap.get(cv2.CAP_PROP_FRAME_WIDTH))
    frame_height = int(cap.get(cv2.CAP_PROP_FRAME_HEIGHT))
    fourcc = cv2.VideoWriter_fourcc(*'mp4v')
    out = cv2.VideoWriter('output.mp4', fourcc, FRAMERATE, (frame_width, frame_height))

    num_frames = 0
    while True:
        ret, frame = cap.read()
        if not ret:
            print("\nError: Failed to capture image.")
            break
        out.write(frame)
        # Count the frame right after writing it so exactly TOTAL_NUM_FRAMES are recorded
        num_frames += 1
        print(f"Recording: {num_frames} / {TOTAL_NUM_FRAMES}", end='\r')
        if num_frames >= TOTAL_NUM_FRAMES:
            print("\nCapture duration reached, stopping recording.")
            break

    # Release the camera and the VideoWriter
    cap.release()
    out.release()


if __name__ == '__main__':
    main()
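If camera index 0 is not the USB camera, a quick way to find other candidates is to list the video device nodes on the board. The following sketch is an illustration under assumptions: it relies on the usual Linux convention that index N corresponds to `/dev/videoN`, and the helper name `video_indices` is hypothetical. Not every node is necessarily a capture device, so each candidate still needs to be tried with `cv2.VideoCapture`.

```python
import glob
import re


def video_indices(paths):
    """Extract the sorted numeric indices from device paths such as /dev/video2."""
    indices = []
    for path in paths:
        match = re.fullmatch(r"/dev/video(\d+)", path)
        if match:
            indices.append(int(match.group(1)))
    return sorted(indices)


if __name__ == "__main__":
    # Candidate values for CAMERA_INDEX; some nodes may not be capture devices.
    found = video_indices(glob.glob("/dev/video*"))
    print(f"Candidate camera indices: {found}")
```

Any index printed here can be assigned to `CAMERA_INDEX` in the example above to test that device.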

MIPI CSI camera

The following Python code shows a basic example using OpenCV to capture from a MIPI CSI camera:

import sys

import cv2

TOTAL_NUM_FRAMES = 100    # Number of frames to record
FRAMERATE = 30.0          # Frame rate written to the output file

# GStreamer pipeline: capture 1080p NV12 at 30 fps from the MIPI CSI camera and convert it for OpenCV
PIPELINE = "qtiqmmfsrc ! 'video/x-raw(memory:GBM),width=(int)1920,height=(int)1080,format=(string)NV12,framerate=(fraction)30/1' ! autovideoconvert ! 'video/x-raw,format=(string)BGRx' ! appsink"


def is_camera_available():
    """Return True if the capture pipeline can be opened."""
    cap = cv2.VideoCapture(PIPELINE)
    if not cap.isOpened():
        return False
    cap.release()
    return True


def main():
    if is_camera_available():
        print("A camera is available.")
    else:
        print("No camera available.")
        sys.exit(1)

    cap = cv2.VideoCapture(PIPELINE)
    if not cap.isOpened():
        print("Error: Could not open video stream.")
        sys.exit(1)
    print("Camera opened successfully.")

    # Use the resolution reported by the pipeline for the output file
    frame_width = int(cap.get(cv2.CAP_PROP_FRAME_WIDTH))
    frame_height = int(cap.get(cv2.CAP_PROP_FRAME_HEIGHT))
    fourcc = cv2.VideoWriter_fourcc(*'mp4v')
    out = cv2.VideoWriter('output.mp4', fourcc, FRAMERATE, (frame_width, frame_height))

    num_frames = 0
    while True:
        ret, frame = cap.read()
        if not ret:
            print("\nError: Failed to capture image.")
            break
        out.write(frame)
        # Count the frame right after writing it so exactly TOTAL_NUM_FRAMES are recorded
        num_frames += 1
        print(f"Recording: {num_frames} / {TOTAL_NUM_FRAMES}", end='\r')
        if num_frames >= TOTAL_NUM_FRAMES:
            print("\nCapture duration reached, stopping recording.")
            break

    # Release the camera and the VideoWriter
    cap.release()
    out.release()


if __name__ == '__main__':
    main()
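The pipeline string in the example hard-codes 1920x1080 at 30 fps. To try other modes, such as the 720p@60 mode used in the GStreamer examples, the string can be generated from parameters. The sketch below only reproduces the layout of the `PIPELINE` constant above. The function name `build_capture_pipeline` is hypothetical, and whether a given resolution and frame rate works depends on the attached sensor.

```python
def build_capture_pipeline(width=1920, height=1080, fps=30):
    """Return a qtiqmmfsrc-to-appsink pipeline description for cv2.VideoCapture."""
    # Same element chain and caps layout as the PIPELINE constant in the example.
    return (
        "qtiqmmfsrc ! "
        f"'video/x-raw(memory:GBM),width=(int){width},height=(int){height},"
        f"format=(string)NV12,framerate=(fraction){fps}/1' ! "
        "autovideoconvert ! "
        "'video/x-raw,format=(string)BGRx' ! "
        "appsink"
    )


if __name__ == "__main__":
    # Print a 720p@60 variant; pass the result to cv2.VideoCapture on the board.
    print(build_capture_pipeline(1280, 720, 60))
```

With the default arguments the function returns the same string as `PIPELINE`, so it can replace the constant without changing the behavior of the example. When changing the frame rate, `FRAMERATE` should be updated to match so that the recorded file plays back at the right speed.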

GStreamer Examples

The following GStreamer pipelines demonstrate how to capture video from a camera attached to the board. Please note that these pipelines require a MIPI CSI camera to function properly.

1080p@30 xvimagesink

gst-launch-1.0 qtiqmmfsrc camera=0 ! "video/x-raw(memory:GBM),format=NV12,width=1920,height=1080,framerate=30/1" ! autovideoconvert ! xvimagesink sync=true

720p@60 xvimagesink

gst-launch-1.0 qtiqmmfsrc camera=0 ! "video/x-raw(memory:GBM),format=NV12,width=1280,height=720,framerate=60/1" ! autovideoconvert ! xvimagesink sync=true

1080p@30 h264

gst-launch-1.0 -e -v qtiqmmfsrc camera=0 ! "video/x-raw(memory:GBM),format=NV12,width=1920,height=1080,framerate=30/1,profile=high,level=(string)5.1" ! qtic2venc target-bitrate=8000000 ! h264parse ! queue ! mp4mux ! filesink location="video_capture_test_1080p.mp4"

720p@60 h264

gst-launch-1.0 -e -v qtiqmmfsrc camera=0 ! "video/x-raw(memory:GBM),format=NV12,width=1280,height=720,framerate=60/1,profile=high,level=(string)5.1" ! qtic2venc target-bitrate=8000000 ! h264parse ! queue ! mp4mux ! filesink location="video_capture_test_720p.mp4"
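The two H.264 recording pipelines differ only in resolution, frame rate, and output file name. As a rough sketch, the command can be assembled in Python as an argument list, which avoids shell quoting of the caps string. The function name `build_record_command` and its parameters are hypothetical; the elements and properties are copied from the pipelines above.

```python
import shlex


def build_record_command(width, height, fps, location, bitrate=8000000):
    """Return the gst-launch-1.0 argument list for an H.264 MP4 recording."""
    # The caps string is a single argument, so no shell quotes are needed.
    caps = (
        f"video/x-raw(memory:GBM),format=NV12,width={width},height={height},"
        f"framerate={fps}/1,profile=high,level=(string)5.1"
    )
    return [
        "gst-launch-1.0", "-e", "-v",
        "qtiqmmfsrc", "camera=0", "!", caps, "!",
        "qtic2venc", f"target-bitrate={bitrate}", "!",
        "h264parse", "!", "queue", "!", "mp4mux", "!",
        "filesink", f"location={location}",
    ]


if __name__ == "__main__":
    cmd = build_record_command(1920, 1080, 30, "video_capture_test_1080p.mp4")
    # Print a shell-safe version of the command for inspection.
    print(" ".join(shlex.quote(arg) for arg in cmd))
```

On an RB5 board with a MIPI CSI camera attached, the returned list can be passed to `subprocess.run`. Because of the `-e` flag, interrupting `gst-launch-1.0` with Ctrl-C sends an end-of-stream event first, which lets `mp4mux` finalize the file.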

RidgeRun Product Use Cases