GstInference/Benchmarks: Difference between revisions

mNo edit summary |

|||

| Line 7: | Line 7: | ||

</noinclude> | </noinclude> | ||

= Benchmarks = | {{DISPLAYTITLE:GstInference Benchmarks|noerror}} | ||

== Benchmarks == | |||

The following benchmarks were run with a source video (1920x1080@60). With the following base gstremear pipeline, and environment variables: | The following benchmarks were run with a source video (1920x1080@60). With the following base gstremear pipeline, and environment variables: | ||

| Line 43: | Line 45: | ||

Where x is the power mode ID (e.g. 0, 1, 2, 3, 4, 5, 6). | Where x is the power mode ID (e.g. 0, 1, 2, 3, 4, 5, 6). | ||

== Summary == | === Summary === | ||

{| class="wikitable" style="display: inline-table;" | {| class="wikitable" style="display: inline-table;" | ||

| Line 142: | Line 144: | ||

|} | |} | ||

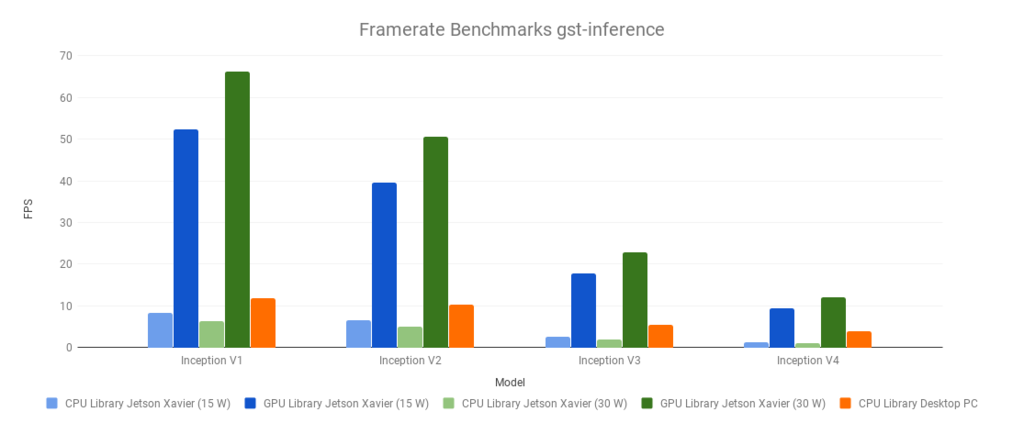

== Framerate == | === Framerate === | ||

[[File:Framerate Benchmarks gst-inference.png|1024px|frameless|thumb|center]] | [[File:Framerate Benchmarks gst-inference.png|1024px|frameless|thumb|center]] | ||

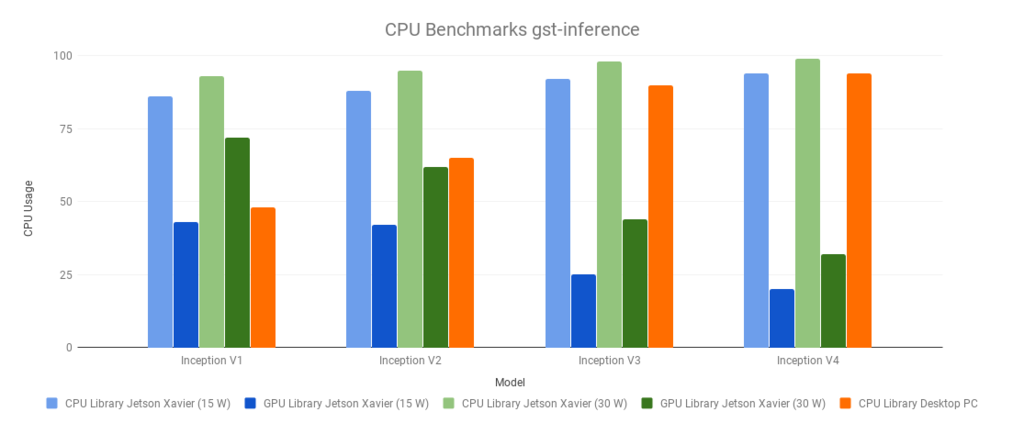

== CPU Usage == | === CPU Usage === | ||

[[File:CPU Benchmarks gst-inference.png|1024px|frameless|thumb|center]] | [[File:CPU Benchmarks gst-inference.png|1024px|frameless|thumb|center]] | ||

Revision as of 20:30, 1 February 2020

Make sure you also check GstInference's companion project: R2Inference |

| GstInference |

|---|

|

| Introduction |

| Getting started |

| Supported architectures |

|

InceptionV1 InceptionV3 YoloV2 AlexNet |

| Supported backends |

|

Caffe |

| Metadata and Signals |

| Overlay Elements |

| Utils Elements |

| Legacy pipelines |

| Example pipelines |

| Example applications |

| Benchmarks |

| Model Zoo |

| Project Status |

| Contact Us |

|


Benchmarks

The following benchmarks were run on a 1920x1080@60 source video, using the following environment variables and base GStreamer pipeline:

$ VIDEO_FILE='video.mp4'
$ MODEL_LOCATION='graph_inceptionv1_tensorflow.pb'
$ INPUT_LAYER='input'
$ OUTPUT_LAYER='InceptionV1/Logits/Predictions/Reshape_1'

The environment variables were adjusted to match the model under test (Inception V1, V2, V3, or V4).

$ GST_DEBUG=inception1:1 gst-launch-1.0 filesrc location=$VIDEO_FILE ! decodebin ! videoconvert ! videoscale ! queue ! net.sink_model inceptionv1 name=net model-location=$MODEL_LOCATION backend=tensorflow backend::input-layer=$INPUT_LAYER backend::output-layer=$OUTPUT_LAYER net.src_model ! perf ! fakesink -v
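Because the same pipeline is reused for every model with only the element name and the variables changed, it can help to generate the command from parameters. The following is a minimal sketch, not part of GstInference: `benchmark_pipeline` is a hypothetical helper that only prints the command line, and the values passed for any model other than Inception V1 have to be taken from that model's own documentation.

```shell
#!/bin/sh
# benchmark_pipeline ELEMENT MODEL_FILE INPUT_LAYER OUTPUT_LAYER
# Hypothetical helper: prints the benchmark command for one model element.
# It does not run GStreamer; VIDEO_FILE falls back to video.mp4 when unset.
benchmark_pipeline() {
    printf '%s\n' "gst-launch-1.0 filesrc location=${VIDEO_FILE:-video.mp4} ! decodebin ! videoconvert ! videoscale ! queue ! net.sink_model $1 name=net model-location=$2 backend=tensorflow backend::input-layer=$3 backend::output-layer=$4 net.src_model ! perf ! fakesink -v"
}

# Inception V1 values as given in this page.
benchmark_pipeline inceptionv1 graph_inceptionv1_tensorflow.pb \
    input InceptionV1/Logits/Predictions/Reshape_1
```

Printing the command instead of executing it keeps the sketch safe to try on a machine without GStreamer; the printed line can then be pasted into a shell on the target device.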

The Desktop PC had the following specifications:

- Intel(R) Core(TM) i7-3770 CPU @ 3.40GHz

- 8 GB RAM

- Cedar [Radeon HD 5000/6000/7350/8350 Series]

- Linux 4.15.0-54-generic x86_64 (Ubuntu 16.04)

The Jetson Xavier was benchmarked in power modes 2 and 6, reported as 15W and 30W in the Summary tables (more information: Supported Modes and Power Efficiency).

- View current power mode:

$ sudo /usr/sbin/nvpmodel -q

- Change current power mode:

$ sudo /usr/sbin/nvpmodel -m x

Where x is the power mode ID (e.g. 0, 1, 2, 3, 4, 5, 6).
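Switching between the two benchmarked modes can be scripted. The sketch below is an illustration only: the `run` and `set_power_mode` helpers and the `DRY_RUN` variable are assumptions introduced here, and by default the script only prints the `nvpmodel` commands shown above instead of executing them.

```shell
#!/bin/sh
# run CMD...: print the command when DRY_RUN is 1 (the default), otherwise execute it.
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# set_power_mode ID: switch to the given power mode, then query it to confirm.
set_power_mode() {
    run sudo /usr/sbin/nvpmodel -m "$1"
    run sudo /usr/sbin/nvpmodel -q
}

# Power modes used for the Jetson Xavier benchmarks on this page.
for mode in 2 6; do
    set_power_mode "$mode"
    # The benchmark pipeline for this mode would be launched here.
done
```

Setting `DRY_RUN=0` on a Jetson board makes the helper execute the commands instead of printing them.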

Summary

| Desktop PC | CPU Library | |
|---|---|---|
| Model | Framerate (fps) | CPU Usage (%) |
| Inception V1 | 11.89 | 48 |
| Inception V2 | 10.33 | 65 |
| Inception V3 | 5.41 | 90 |
| Inception V4 | 3.81 | 94 |

| Jetson Xavier (15W) | CPU Library | | GPU Library | |
|---|---|---|---|---|
| Model | Framerate (fps) | CPU Usage (%) | Framerate (fps) | CPU Usage (%) |
| Inception V1 | 8.24 | 86 | 52.3 | 43 |
| Inception V2 | 6.58 | 88 | 39.6 | 42 |
| Inception V3 | 2.54 | 92 | 17.8 | 25 |
| Inception V4 | 1.22 | 94 | 9.4 | 20 |

| Jetson Xavier (30W) | CPU Library | | GPU Library | |
|---|---|---|---|---|
| Model | Framerate (fps) | CPU Usage (%) | Framerate (fps) | CPU Usage (%) |
| Inception V1 | 6.41 | 93 | 66.27 | 72 |
| Inception V2 | 5.11 | 95 | 50.59 | 62 |
| Inception V3 | 1.96 | 98 | 22.95 | 44 |
| Inception V4 | 0.98 | 99 | 12.14 | 32 |
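To compare the two libraries on the Jetson Xavier, the GPU framerate can be divided by the CPU framerate for each model. The sketch below does this arithmetic with `awk` on the figures from the tables above; the `speedup` helper is a name introduced here for illustration.

```shell
#!/bin/sh
# speedup CPU_FPS GPU_FPS: print the GPU framerate divided by the CPU framerate.
speedup() {
    awk -v cpu="$1" -v gpu="$2" 'BEGIN { printf "%.2f\n", gpu / cpu }'
}

# Columns: power mode, model, CPU-library fps, GPU-library fps (Summary tables).
while read -r mode model cpu gpu; do
    printf '%s Inception %s: %sx\n' "$mode" "$model" "$(speedup "$cpu" "$gpu")"
done <<'EOF'
15W V1 8.24 52.3
15W V2 6.58 39.6
15W V3 2.54 17.8
15W V4 1.22 9.4
30W V1 6.41 66.27
30W V2 5.11 50.59
30W V3 1.96 22.95
30W V4 0.98 12.14
EOF
```

For example, Inception V1 at 30W works out to 66.27 / 6.41, roughly a 10.3x higher framerate with the GPU library.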

Framerate

[Figure: Framerate Benchmarks gst-inference.png]

CPU Usage

[Figure: CPU Benchmarks gst-inference.png]