GstInference with TinyYoloV2 architecture

Description

TinyYOLO (also called Tiny Darknet) is the lightweight version of the YOLO (You Only Look Once) real-time object detection deep neural network. TinyYOLO is smaller and faster than YOLO while still being more accurate than other lightweight models. The following table compares AlexNet, Darknet, SqueezeNet, and Tiny Darknet.

| Model | Top-1 (%) | Top-5 (%) | Ops | Size |
|---|---|---|---|---|
| AlexNet | 57.0 | 80.3 | 2.27 Bn | 238 MB |
| Darknet | 61.1 | 83.0 | 0.81 Bn | 28 MB |
| SqueezeNet | 57.5 | 80.3 | 2.17 Bn | 4.8 MB |
| Tiny Darknet | 58.7 | 81.7 | 0.98 Bn | 4.0 MB |

TinyYOLO results are also very similar to YOLO's. The following image compares both results:

Architecture

The YOLO detection network has 24 convolutional layers followed by 2 fully connected layers. It uses alternating 1×1 convolutional layers to reduce the feature space between layers. The convolutional layers are pretrained on the ImageNet classification task at half the resolution (224 × 224 input image) and then the resolution is doubled for the detection training.

Tiny YOLO operates on the same principles as YOLO but with a reduced number of parameters. It has only 9 convolutional layers, compared to YOLO's 24.

GStreamer Plugin

The GStreamer plugin uses the pre-process and post-process described in the original paper. Please take into consideration that not all deep neural networks are trained the same even if they use the same model architecture. If the model is trained differently, details like label ordering, input dimensions, and color normalization can change.

This element was based on the ncappzoo repo. The pre-trained model used to test the element may be downloaded from our R2I Model Zoo for the different frameworks.

Properties

| Property | Value | Description |
|---|---|---|
| backend | Enum (0,1) | Backend used for inference |
| model-location | String | Path to the model to use |
| object-threshold | Double [0,1] | Objectness threshold |
| probability-threshold | Double [0,1] | Class probability threshold |
| iou-threshold | Double [0,1] | Intersection over union threshold to merge similar boxes |

Pre-process

Input parameters:

- Input size: 416 x 416

- Format: RGB

The pre-process resizes the input image to the input size (by scaling, interpolation, cropping, etc.) and divides each pixel channel value by 255, which maps the values to the range [0, 1].
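As an illustration of the pre-process described above, here is a minimal NumPy sketch. It is not the plugin's code: the function name `preprocess` is hypothetical, and nearest-neighbour sampling is just one assumed way to reach the 416 x 416 input size.

```python
import numpy as np

INPUT_SIZE = 416  # network input width and height, from the list above


def preprocess(image: np.ndarray, size: int = INPUT_SIZE) -> np.ndarray:
    """Resize an HxWx3 uint8 RGB image to size x size and scale it to [0, 1]."""
    height, width = image.shape[:2]
    # Nearest-neighbour resize (an assumption): choose one source row and
    # column for every output pixel.
    rows = np.arange(size) * height // size
    cols = np.arange(size) * width // size
    resized = image[rows[:, None], cols[None, :]]
    # Divide each channel value by 255 as described in the text.
    return resized.astype(np.float32) / 255.0
```

For example, a 640 x 480 RGB frame would come out as a `float32` array of shape `(416, 416, 3)`.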

Post-process

Output parameters:

- Grid dimensions: 13x13

- Grid cell dimensions: 32x32 px

- Number of classes: 20

- Labels: ["aeroplane", "bicycle", "bird", "boat", "bottle", "bus", "car", "cat", "chair", "cow", "diningtable", "dog", "horse", "motorbike", "person", "pottedplant", "sheep", "sofa", "train", "tvmonitor"]

- Anchors: [1.08, 1.19, 3.42, 4.41, 6.63, 11.38, 9.42, 5.11, 16.62, 10.52]

- Number of boxes per cell: 5

- Box dim: 4 [x_center, y_center, width, height]

- Objectness threshold: 0.08 (configurable)

- Probability threshold: 0.08 (configurable)

- Intersection over union threshold: 0.30 (configurable)

The post-process of the YOLO network is considerably more complex than the pre-process:

- Reshape the output tensor to 13x13x5x25, that is, grid_rows x grid_columns x n_boxes_in_each_cell x n_predictions_for_each_box

- The 25 predictions are: 2 center coordinates and 2 size values (x, y, w, h), 1 objectness score, and 20 class scores

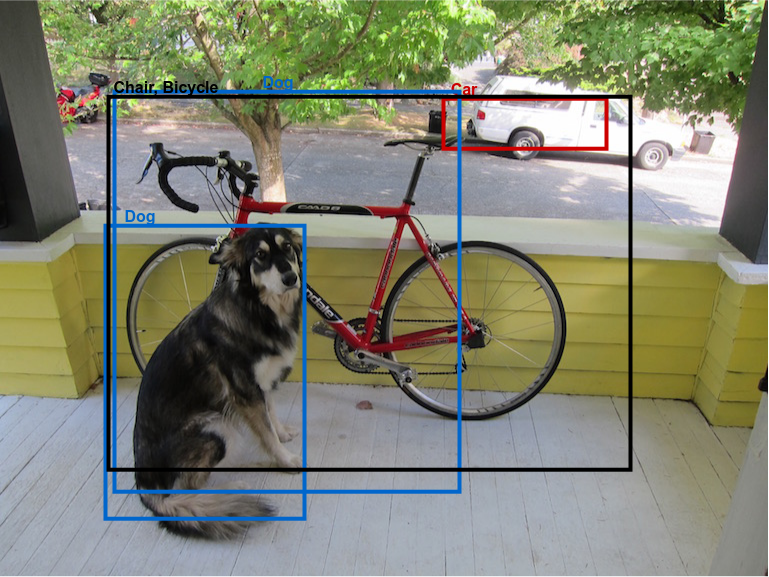
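The reshape step above can be sketched in NumPy as follows. This is only an illustration: `split_predictions` is a hypothetical helper, and it assumes a row-major output where the 25 values of each box are contiguous and ordered as in the list (coordinates, objectness, class scores). The actual memory layout depends on the backend and on how the model was exported.

```python
import numpy as np

GRID = 13                # grid cells per side
BOXES = 5                # boxes predicted per cell
CLASSES = 20             # class scores per box
PREDS = 4 + 1 + CLASSES  # 25 values per box


def split_predictions(raw: np.ndarray):
    """Reshape a flat output into (13, 13, 5, 25) and split it by meaning."""
    tensor = np.asarray(raw, dtype=np.float32).reshape(GRID, GRID, BOXES, PREDS)
    coords = tensor[..., 0:4]       # box center and size values
    objectness = tensor[..., 4]     # one objectness score per box
    class_scores = tensor[..., 5:]  # 20 class scores per box
    return coords, objectness, class_scores
```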

Each resulting box needs to be scaled by an anchor and the grid size. The post-process considers only boxes whose probabilities surpass the user-defined objectness threshold (0.08 by default) and class probability threshold (0.08 by default). Then it extracts the boxes from the final part of the array corresponding to the probabilities above the thresholds. An example result can be seen in the following image:
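The scaling and thresholding described above could look roughly like the sketch below. The page does not spell out the exact formulas, so the sketch assumes the usual YOLOv2 conventions: a sigmoid on the center offsets and the objectness, an exponential on the size values, a softmax over the class scores, and anchors read as (width, height) pairs in grid-cell units. The names `decode`, `sigmoid`, and `softmax` are hypothetical helpers, not part of the plugin.

```python
import numpy as np

GRID, CELL_PX = 13, 32  # 13x13 grid, 32x32 px cells (416 / 13)
# Anchors from the list above, assumed to be (width, height) pairs.
ANCHORS = np.array([1.08, 1.19, 3.42, 4.41, 6.63, 11.38,
                    9.42, 5.11, 16.62, 10.52]).reshape(5, 2)


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def softmax(x):
    e = np.exp(x - x.max(axis=-1, keepdims=True))
    return e / e.sum(axis=-1, keepdims=True)


def decode(tensor, obj_thresh=0.08, prob_thresh=0.08):
    """Return (x_center, y_center, width, height, class_id, prob) tuples in pixels."""
    detections = []
    for row in range(GRID):
        for col in range(GRID):
            for b in range(len(ANCHORS)):
                tx, ty, tw, th, to = tensor[row, col, b, :5]
                # Skip boxes below the objectness threshold.
                if sigmoid(to) <= obj_thresh:
                    continue
                probs = softmax(tensor[row, col, b, 5:])
                class_id = int(np.argmax(probs))
                # Skip boxes whose best class is below the probability threshold.
                if probs[class_id] <= prob_thresh:
                    continue
                # Scale by the grid cell size and by the anchor of this box.
                x = (col + sigmoid(tx)) * CELL_PX
                y = (row + sigmoid(ty)) * CELL_PX
                w = ANCHORS[b, 0] * np.exp(tw) * CELL_PX
                h = ANCHORS[b, 1] * np.exp(th) * CELL_PX
                detections.append((float(x), float(y), float(w), float(h),
                                   class_id, float(probs[class_id])))
    return detections
```

The input is expected to be the 13x13x5x25 tensor from the reshape step, and the returned class index refers to the label list above.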

Next, duplicated boxes must be removed or merged. We use the intersection over union (IoU) metric: two boxes whose IoU surpasses a configurable threshold (0.30 by default, as listed above) are considered the same object. In that case, we keep the box with the highest probability and remove the other one. The final result can be seen in the following figure:
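The duplicate removal described above can be sketched as a greedy filter. This is an illustrative version, not the plugin's code: `iou` and `remove_duplicates` are hypothetical names, boxes are assumed to be tuples starting with (x_center, y_center, width, height) and ending with the probability, and the default threshold is taken from the parameter list.

```python
def iou(a, b):
    """Intersection over union of two (x_center, y_center, width, height) boxes."""
    ax1, ay1 = a[0] - a[2] / 2, a[1] - a[3] / 2
    ax2, ay2 = a[0] + a[2] / 2, a[1] + a[3] / 2
    bx1, by1 = b[0] - b[2] / 2, b[1] - b[3] / 2
    bx2, by2 = b[0] + b[2] / 2, b[1] + b[3] / 2
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = a[2] * a[3] + b[2] * b[3] - inter
    return inter / union if union > 0 else 0.0


def remove_duplicates(detections, iou_thresh=0.30):
    """Keep the highest-probability box among boxes that overlap too much."""
    kept = []
    # Visit boxes from most to least probable; the last tuple field is the probability.
    for det in sorted(detections, key=lambda d: d[-1], reverse=True):
        # Keep a box only if it does not overlap an already kept box
        # by more than the threshold.
        if all(iou(det, k) <= iou_thresh for k in kept):
            kept.append(det)
    return kept
```

Processing the boxes in descending probability order guarantees that, within each group of overlapping boxes, the one that survives is the most probable, which matches the behavior described in the text.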

Examples

Please refer to the TinyYOLO section on the examples page.

References

- J. Redmon, S. Divvala, R. Girshick, and A. Farhadi, “You Only Look Once: Unified, Real-Time Object Detection”, in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 779–788, 2016.