GstInference with InceptionV2 layer

Description

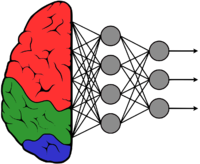

GoogLeNet is a convolutional neural network for image classification. It was designed to participate in the ImageNet challenge, a competition in which research teams evaluate classification algorithms on the ImageNet data set and compete for the highest accuracy. The challenge data set is a collection of hand-labeled images from 1000 distinct categories. GoogLeNet won the ImageNet challenge in 2014 while using twelve times fewer parameters than AlexNet, the 2012 winner. Its authors achieved this by building the network around a new component, the "inception layer".

Architecture

The network builds on the LeNet CNN but adds a novel element, the inception layer. The inception layer uses several very small convolutions to reduce the number of parameters. The architecture stacks 9 inception layers in a CNN that is 22 layers deep.
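The parameter saving can be illustrated with a little arithmetic. The sketch below assumes that the "very small convolutions" are 1x1 convolutions that shrink the channel count before a larger 5x5 convolution, as the paper describes; the channel counts and the `conv_weights` helper are illustrative choices for this example, not values taken from the plugin.

```python
def conv_weights(in_channels, out_channels, kernel_size):
    """Number of weights in a square 2-D convolution (biases ignored)."""
    return in_channels * out_channels * kernel_size * kernel_size


# Illustrative channel counts (assumed for this example).
IN_CH, REDUCED_CH, OUT_CH = 192, 16, 32

# A 5x5 convolution applied directly to the input.
direct = conv_weights(IN_CH, OUT_CH, 5)

# A 1x1 convolution that shrinks the channel count, followed by the 5x5.
reduced = conv_weights(IN_CH, REDUCED_CH, 1) + conv_weights(REDUCED_CH, OUT_CH, 5)

print(direct, reduced)  # 153600 15872
```

With these assumed sizes, the reduced branch needs roughly a tenth of the weights of the direct 5x5 convolution.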

GStreamer Plugin

The GStreamer plugin uses the pre-processing and post-processing described in the original paper. Keep in mind that deep neural networks sharing the same model architecture are not necessarily trained the same way. If a model is trained differently, details such as label ordering, input dimensions, and color normalization can change.

This element was based on the ncappzoo repo. The pre-trained model used to test the element may be downloaded from our R2I Model Zoo for the different frameworks.

Pre-process

Input parameters:

- Input size: 224 x 224

- RGB Mean: [0.40787054 * 255, 0.45752458 * 255, 0.48109378 * 255]

- Format: BGR

Pre-processing first transforms the input image to the input size (by scaling, interpolation, cropping...). Then the mean is subtracted from each RGB channel of every pixel. Finally, the image is converted to BGR by reversing the order of the channels.
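The three steps above can be sketched in plain Python. This is a minimal illustration, not the plugin's implementation: it assumes the image is a nested list of `[R, G, B]` pixels, that the mean values apply in the R, G, B order in which they are listed, and that nearest-neighbor scaling is an acceptable way to reach the input size. The names `resize_nearest` and `preprocess` are hypothetical.

```python
# Per-channel mean as listed in the input parameters, scaled to 0-255.
# Assumption: the three values apply to R, G, B in that order.
MEAN = [0.40787054 * 255, 0.45752458 * 255, 0.48109378 * 255]
INPUT_SIZE = 224


def resize_nearest(image, size):
    """Scale an image (rows of [R, G, B] pixels) to size x size pixels."""
    src_h, src_w = len(image), len(image[0])
    return [
        [image[y * src_h // size][x * src_w // size] for x in range(size)]
        for y in range(size)
    ]


def preprocess(image):
    """Resize, subtract the mean per channel, then reverse RGB to BGR."""
    resized = resize_nearest(image, INPUT_SIZE)
    return [
        [[c - m for c, m in zip(pixel, MEAN)][::-1] for pixel in row]
        for row in resized
    ]


# Usage: a made-up 2x2 RGB frame.
frame = [[[255, 0, 0], [0, 255, 0]],
         [[0, 0, 255], [128, 128, 128]]]
tensor = preprocess(frame)
print(len(tensor), len(tensor[0]), len(tensor[0][0]))  # 224 224 3
```

Subtracting the mean before reversing the channels keeps each mean value paired with the channel it was listed for.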

Post-process

The model output is a float array of size 1000 containing the probability of each ImageNet label. Post-processing simply searches the array for the highest probability; the index of that entry identifies the predicted label.
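The search for the highest probability amounts to an argmax over the output array. A minimal sketch in plain Python follows; the `postprocess` function and the optional `labels` list are hypothetical names used only for illustration.

```python
def postprocess(probabilities, labels=None):
    """Return (index, probability, label) of the most likely class."""
    best = max(range(len(probabilities)), key=probabilities.__getitem__)
    label = labels[best] if labels is not None else None
    return best, probabilities[best], label


# Usage: a made-up 1000-element output with one dominant class.
output = [0.0] * 1000
output[42] = 0.9
index, prob, label = postprocess(output)
print(index, prob)  # 42 0.9
```

Because label ordering depends on how the model was trained, the `labels` list has to match the model actually in use.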

Examples

Please refer to the Googlenet section on the examples page.

References

- C. Szegedy, W. Liu, Y. Jia, P. Sermanet, S. Reed, D. Anguelov, D. Erhan, V. Vanhoucke, and A. Rabinovich, "Going deeper with convolutions", in Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 1–9, 2015.