== TensorRT Benchmarks ==

=== FPS Measurements ===

<html>

<style>

.button {

background-color: #008CBA;

border: none;

color: white;

padding: 15px 32px;

text-align: center;

text-decoration: none;

display: inline-block;

font-size: 16px;

margin: 4px 2px;

cursor: pointer;

}

</style>

<div id="Buttons_Model_Tensorrt_Fps" style="margin: auto; width: 1300px; height: auto;">

<button class="button" id="show_inceptionv1_tensorrt_fps">Show InceptionV1 </button>

<button class="button" id="show_inceptionv2_tensorrt_fps">Show InceptionV2 </button>

<button class="button" id="show_inceptionv3_tensorrt_fps">Show InceptionV3 </button>

<button class="button" id="show_inceptionv4_tensorrt_fps">Show InceptionV4 </button>

<button class="button" id="show_tinyyolov2_tensorrt_fps">Show TinyYoloV2 </button>

<button class="button" id="show_tinyyolov3_tensorrt_fps">Show TinyYoloV3 </button>

</div>

<br><br>

<div id="chart_tensorrtrt" style="margin: auto; width: 800px; height: 500px;"></div>

<br><br>

<div id="Buttons_Backend_TensorRT_Fps" style="margin: auto; width: 600px; height: auto;">

<button class="button" id="show_tensorrt_fps">Show TensorRT </button>

</div>

<div id="chart_tensorrt1" style="margin: auto; width: 800px; height: 500px;"></div>

<br><br>

<script>

google.charts.load('current', {'packages':['corechart', 'bar']});

google.charts.setOnLoadCallback(drawStuffFpsTensorRT);

function drawStuffFpsTensorRT() {

var tensorrt_chartDiv = document.getElementById('chart_tensorrtrt');

var tensorrt_chartDiv1 = document.getElementById('chart_tensorrt1');

var table_backend_platform_tensorrt_fps = google.visualization.arrayToDataTable([

['Platform', //Column 0

'InceptionV1 \n TensorRT', //Column 1

'InceptionV2 \n TensorRT', //Column 2

'InceptionV3 \n TensorRT', //Column 3

'InceptionV4 \n TensorRT', //Column 4

'TinyYoloV2 \n TensorRT', //Column 5

'TinyYoloV3 \n TensorRT'], //Column 6

['x86', 47.8702, 32.7236, 12.092, 5.2632, 16.03, 18.3592]

]);

var table_model_platform_tensorrt_fps = google.visualization.arrayToDataTable([

['Model', //Column 0

'TensorRT \n x86'], //Column 1

['InceptionV1', 47.8702], //row 1

['InceptionV2', 32.7236], //row 2

['InceptionV3', 12.092], //row 3

['InceptionV4', 5.2632], //row 4

['TinyYoloV2', 16.03], //row 5

['TinyYoloV3', 18.3592] //row 6

]);

var tensorrt_materialOptions = {

width: 320,

chart: {

title: 'Backend Vs Platform per model',

},

series: {

},

axes: {

y: {

distance: {side: 'left',label: 'FPS'}, // Left y-axis.

}

}

};

var tensorrt_materialOptions1 = {

width: 900,

chart: {

title: 'Model Vs Platform per backend',

},

series: {

},

axes: {

y: {

distance: {side: 'left',label: 'FPS'}, // Left y-axis.

}

}

};

var materialChart_tensorrt_fps = new google.charts.Bar(tensorrt_chartDiv);

var materialChart_tensorrt_fps1 = new google.charts.Bar(tensorrt_chartDiv1);

var view_tensorrt_fps = new google.visualization.DataView(table_backend_platform_tensorrt_fps);

var view_tensorrt_fps1 = new google.visualization.DataView(table_model_platform_tensorrt_fps);

function drawMaterialChart() {

// Reuse the chart objects created above instead of shadowing them with new ones.

materialChart_tensorrt_fps.draw(table_backend_platform_tensorrt_fps, google.charts.Bar.convertOptions(tensorrt_materialOptions));

materialChart_tensorrt_fps1.draw(table_model_platform_tensorrt_fps, google.charts.Bar.convertOptions(tensorrt_materialOptions1));

init_charts();

}

function init_charts(){

view_tensorrt_fps.setColumns([0,1]);

view_tensorrt_fps.hideColumns([2, 3, 4, 5, 6]);

materialChart_tensorrt_fps.draw(view_tensorrt_fps, tensorrt_materialOptions);

view_tensorrt_fps1.setColumns([0,1]);

materialChart_tensorrt_fps1.draw(view_tensorrt_fps1, tensorrt_materialOptions1);

}

// REF_MODEL

/*Select the Model that you want to show in the chart*/

var show_inceptionv1_tensorrt_fps = document.getElementById('show_inceptionv1_tensorrt_fps');

show_inceptionv1_tensorrt_fps.onclick = function () {

view_tensorrt_fps.setColumns([0,1]);

view_tensorrt_fps.hideColumns([2, 3, 4, 5, 6]);

materialChart_tensorrt_fps.draw(view_tensorrt_fps, tensorrt_materialOptions);

}

var show_inceptionv2_tensorrt_fps = document.getElementById('show_inceptionv2_tensorrt_fps');

show_inceptionv2_tensorrt_fps.onclick = function () {

view_tensorrt_fps.setColumns([0,2]);

view_tensorrt_fps.hideColumns([1, 3, 4, 5, 6]);

materialChart_tensorrt_fps.draw(view_tensorrt_fps, tensorrt_materialOptions);

}

var show_inceptionv3_tensorrt_fps = document.getElementById('show_inceptionv3_tensorrt_fps');

show_inceptionv3_tensorrt_fps.onclick = function () {

view_tensorrt_fps.setColumns([0,3]);

view_tensorrt_fps.hideColumns([1, 2, 4, 5, 6]);

materialChart_tensorrt_fps.draw(view_tensorrt_fps, tensorrt_materialOptions);

}

var show_inceptionv4_tensorrt_fps = document.getElementById('show_inceptionv4_tensorrt_fps');

show_inceptionv4_tensorrt_fps.onclick = function () {

view_tensorrt_fps.setColumns([0,4]);

view_tensorrt_fps.hideColumns([1, 2, 3, 5, 6]);

materialChart_tensorrt_fps.draw(view_tensorrt_fps, tensorrt_materialOptions);

}

var show_tinyyolov2_tensorrt_fps = document.getElementById('show_tinyyolov2_tensorrt_fps');

show_tinyyolov2_tensorrt_fps.onclick = function () {

view_tensorrt_fps.setColumns([0,5]);

view_tensorrt_fps.hideColumns([1, 2, 3, 4, 6]);

materialChart_tensorrt_fps.draw(view_tensorrt_fps, tensorrt_materialOptions);

}

var show_tinyyolov3_tensorrt_fps = document.getElementById('show_tinyyolov3_tensorrt_fps');

show_tinyyolov3_tensorrt_fps.onclick = function () {

view_tensorrt_fps.setColumns([0,6]);

view_tensorrt_fps.hideColumns([1, 2, 3, 4, 5]);

materialChart_tensorrt_fps.draw(view_tensorrt_fps, tensorrt_materialOptions);

}

// REF_BACKEND

/*Select the Backend that you want to show in the chart*/

var show_tensorrt_fps = document.getElementById('show_tensorrt_fps');

show_tensorrt_fps.onclick = function () {

view_tensorrt_fps1.setColumns([0,1]);

materialChart_tensorrt_fps1.draw(view_tensorrt_fps1, tensorrt_materialOptions1);

}

drawMaterialChart();

};

</script>

</html>

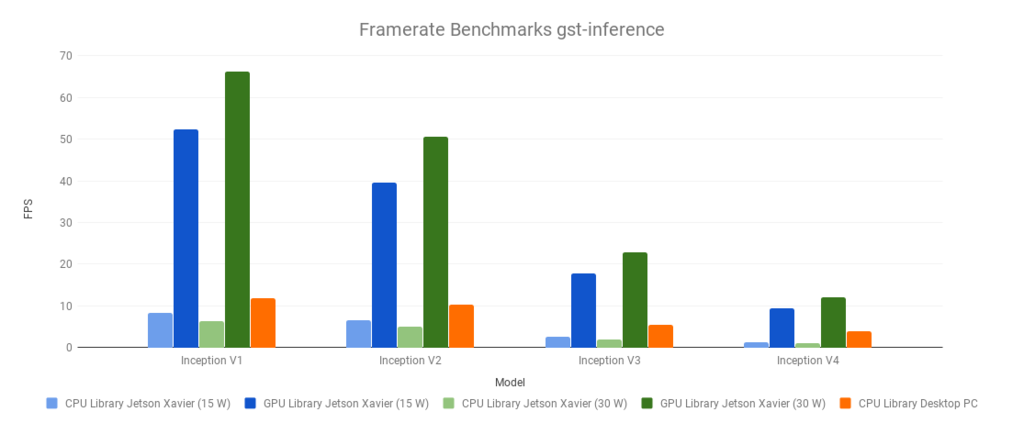

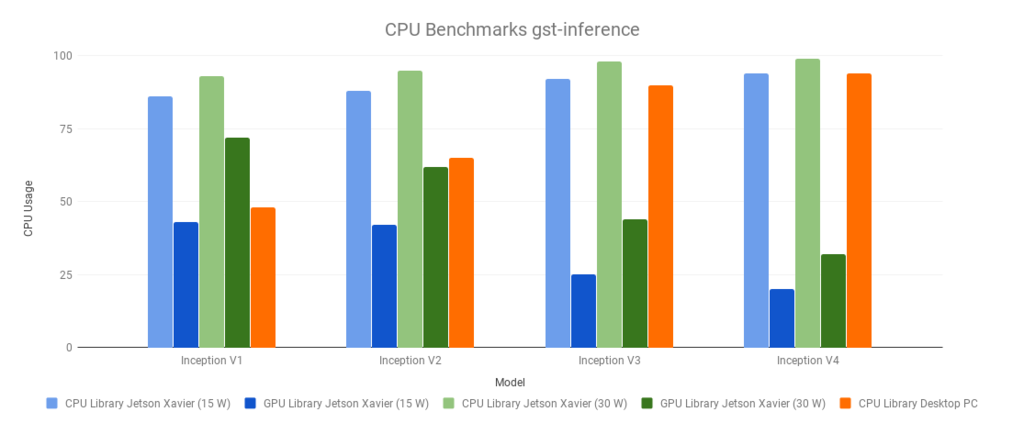

== GstInference Benchmarks ==

The following benchmarks were run with a 1920x1080@60 source video, using the base GStreamer pipeline and environment variables shown here:

$ VIDEO_FILE='video.mp4'

$ MODEL_LOCATION='graph_inceptionv1_tensorflow.pb'

$ INPUT_LAYER='input'

$ OUTPUT_LAYER='InceptionV1/Logits/Predictions/Reshape_1'

The environment variables were changed according to the model used (Inception V1, V2, V3, or V4).

GST_DEBUG=inception1:1 gst-launch-1.0 filesrc location=$VIDEO_FILE ! decodebin ! videoconvert ! videoscale ! queue ! net.sink_model inceptionv1 name=net model-location=$MODEL_LOCATION backend=tensorflow backend::input-layer=$INPUT_LAYER backend::output-layer=$OUTPUT_LAYER net.src_model ! perf ! fakesink -v
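Since only the element name and the three model variables change between runs, the command can be generated per model. The following is a minimal sketch, assuming a hypothetical helper named `inception_cmd`. The model file and layer names are passed in by the caller, because only the Inception V1 values are listed here. The helper prints the command rather than running it.

```shell
#!/bin/sh
# Sketch only: inception_cmd is a hypothetical helper, not part of GstInference.
# It prints the benchmark command for one model so it can be checked before use.
# Arguments: element name, model file, input layer, output layer.
inception_cmd() {
  element=$1
  model_location=$2
  input_layer=$3
  output_layer=$4
  # Falls back to the file name used in this section when VIDEO_FILE is unset.
  video_file=${VIDEO_FILE:-video.mp4}

  # printf repeats the '%s ' format once per argument.
  printf '%s ' \
    gst-launch-1.0 filesrc "location=$video_file" '!' decodebin '!' \
    videoconvert '!' videoscale '!' queue '!' net.sink_model \
    "$element" name=net "model-location=$model_location" backend=tensorflow \
    "backend::input-layer=$input_layer" "backend::output-layer=$output_layer" \
    net.src_model '!' perf '!' fakesink -v
  printf '\n'
}

# Values for Inception V1, taken from the variables listed in this section.
inception_cmd inceptionv1 graph_inceptionv1_tensorflow.pb input \
  'InceptionV1/Logits/Predictions/Reshape_1'
```

For the other models, the same call would be repeated with that model's element name, graph file, and layer names.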

The Desktop PC had the following specifications:

Intel(R) Core(TM) i7-3770 CPU @ 3.40GHz

8 GB RAM

Cedar [Radeon HD 5000/6000/7350/8350 Series]

Linux 4.15.0-54-generic x86_64 (Ubuntu 16.04)

The Jetson Xavier power modes used were 2 and 6 (more information: Supported Modes and Power Efficiency).

Query the current power mode:

$ sudo /usr/sbin/nvpmodel -q

Change the current power mode:

$ sudo /usr/sbin/nvpmodel -m x

where x is the power mode ID (e.g. 0, 1, 2, 3, 4, 5, 6).
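When switching modes between benchmark runs, it can help to validate the mode ID before calling `nvpmodel`. The following is a minimal sketch, assuming a hypothetical helper named `set_power_mode_cmd` and assuming that valid IDs are the 0 to 6 range given as an example here. It only prints the command; it does not change the power mode.

```shell
#!/bin/sh
# Sketch only: set_power_mode_cmd is a hypothetical helper.
# Assumption: valid mode IDs are the single digits 0 to 6 mentioned in the text.
set_power_mode_cmd() {
  case "$1" in
    [0-6]) printf 'sudo /usr/sbin/nvpmodel -m %s\n' "$1" ;;
    *) echo "invalid power mode ID: $1" >&2; return 1 ;;
  esac
}

# The two modes used for the Jetson Xavier benchmarks.
for mode in 2 6; do
  set_power_mode_cmd "$mode"
done
```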

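=== Summary ===

{| class="wikitable"
|+ Desktop PC
! rowspan="2" | Model
! colspan="2" | CPU Library
|-
! Framerate (FPS) !! CPU Usage (%)
|-
| Inception V1 || 11.89 || 48
|-
| Inception V2 || 10.33 || 65
|-
| Inception V3 || 5.41 || 90
|-
| Inception V4 || 3.81 || 94
|}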

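{| class="wikitable"
|+ Jetson Xavier (15W)
! rowspan="2" | Model
! colspan="2" | CPU Library
! colspan="2" | GPU Library
|-
! Framerate (FPS) !! CPU Usage (%) !! Framerate (FPS) !! CPU Usage (%)
|-
| Inception V1 || 8.24 || 86 || 52.3 || 43
|-
| Inception V2 || 6.58 || 88 || 39.6 || 42
|-
| Inception V3 || 2.54 || 92 || 17.8 || 25
|-
| Inception V4 || 1.22 || 94 || 9.4 || 20
|}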

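{| class="wikitable"
|+ Jetson Xavier (30W)
! rowspan="2" | Model
! colspan="2" | CPU Library
! colspan="2" | GPU Library
|-
! Framerate (FPS) !! CPU Usage (%) !! Framerate (FPS) !! CPU Usage (%)
|-
| Inception V1 || 6.41 || 93 || 66.27 || 72
|-
| Inception V2 || 5.11 || 95 || 50.59 || 62
|-
| Inception V3 || 1.96 || 98 || 22.95 || 44
|-
| Inception V4 || 0.98 || 99 || 12.14 || 32
|}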
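One way to read the Jetson Xavier results is as the ratio of GPU framerate to CPU framerate for each model. The following is a minimal sketch of that arithmetic for the 15W results, assuming a hypothetical helper named `speedup`. The input values are copied from the Jetson Xavier (15W) results.

```shell
#!/bin/sh
# Sketch only: speedup is a hypothetical helper that divides the GPU framerate
# by the CPU framerate and prints the ratio with one decimal place.
# Arguments: CPU framerate, GPU framerate.
speedup() {
  awk -v cpu="$1" -v gpu="$2" 'BEGIN { printf "%.1f\n", gpu / cpu }'
}

# Columns: model, CPU framerate, GPU framerate (Jetson Xavier, 15W).
while read -r model cpu gpu; do
  printf '%s: %sx\n' "$model" "$(speedup "$cpu" "$gpu")"
done <<'EOF'
InceptionV1 8.24 52.3
InceptionV2 6.58 39.6
InceptionV3 2.54 17.8
InceptionV4 1.22 9.4
EOF
```

The same loop can be fed the 30W rows to compare the two power modes.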

(Figure: Framerate)

(Figure: CPU Usage)

== TensorFlow Lite Benchmarks ==

=== FPS measurement ===

(Interactive chart: FPS per model (InceptionV1, InceptionV2, InceptionV3, InceptionV4, TinyYoloV2, TinyYoloV3), per backend (TensorFlow, TensorFlow Lite, EdgeTPU), and per platform (x86, x86+GPU, TX2, TX2+GPU, Coral, Coral+TPU).)

=== CPU usage measurement ===

(Interactive chart: CPU usage per model (InceptionV1, InceptionV2, InceptionV3, InceptionV4, TinyYoloV2, TinyYoloV3), per backend (TensorFlow, TensorFlow Lite, EdgeTPU), and per platform (x86, x86+GPU, TX2, TX2+GPU, Coral, Coral+TPU).)

=== Test benchmark video ===

The following video was used to perform the benchmark tests.

https://developer.ridgerun.com/wiki/index.php/File:Test_benchmark_video.mp4

== ONNXRT Benchmarks ==

The Desktop PC had the following specifications:

Intel(R) Core(TM) i7-7700HQ CPU @ 2.80GHz

12 GB RAM

Linux 4.15.0-106-generic x86_64 (Ubuntu 16.04)

GStreamer 1.8.3

The following GStreamer pipeline was used to obtain the results:

# MODELS_PATH has the following structure

#/path/to/models/

#├── InceptionV1_onnxrt

#│ ├── graph_inceptionv1_info.txt

#│ ├── graph_inceptionv1.onnx

#│ └── labels.txt

#├── InceptionV2_onnxrt

#│ ├── graph_inceptionv2_info.txt

#│ ├── graph_inceptionv2.onnx

#│ └── labels.txt

#├── InceptionV3_onnxrt

#│ ├── graph_inceptionv3_info.txt

#│ ├── graph_inceptionv3.onnx

#│ └── labels.txt

#├── InceptionV4_onnxrt

#│ ├── graph_inceptionv4_info.txt

#│ ├── graph_inceptionv4.onnx

#│ └── labels.txt

#├── TinyYoloV2_onnxrt

#│ ├── graph_tinyyolov2_info.txt

#│ ├── graph_tinyyolov2.onnx

#│ └── labels.txt

#└── TinyYoloV3_onnxrt

# ├── graph_tinyyolov3_info.txt

# ├── graph_tinyyolov3.onnx

# └── labels.txt

model_array=(inceptionv1 inceptionv2 inceptionv3 inceptionv4 tinyyolov2 tinyyolov3)

model_upper_array=(InceptionV1 InceptionV2 InceptionV3 InceptionV4 TinyYoloV2 TinyYoloV3)

MODELS_PATH=/path/to/models/

INTERNAL_PATH=onnxrt

EXTENSION=".onnx"

gst-launch-1.0 \

filesrc location=$VIDEO_PATH num-buffers=600 ! decodebin ! videoconvert ! \

perf print-arm-load=true name=inputperf ! tee name=t t. ! videoscale ! queue ! net.sink_model t. ! queue ! net.sink_bypass \

${model_array[i]} backend=onnxrt name=net \

model-location="${MODELS_PATH}${model_upper_array[i]}_${INTERNAL_PATH}/graph_${model_array[i]}${EXTENSION}" \

net.src_bypass ! perf print-arm-load=true name=outputperf ! videoconvert ! fakesink sync=false
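The pipeline above indexes `model_array` and `model_upper_array` with `i`, so it is presumably run once per model, although the loop itself is not shown. The following is a minimal sketch of how the `model-location` value could be built for each model from the directory layout listed above. The helpers `upper_name` and `model_location` are hypothetical names, and the sketch avoids arrays so that it also runs under a plain POSIX shell. It prints the paths instead of launching GStreamer.

```shell
#!/bin/sh
# Sketch only: upper_name and model_location are hypothetical helpers,
# not part of GstInference. Paths follow the MODELS_PATH layout shown above.
MODELS_PATH=/path/to/models/
INTERNAL_PATH=onnxrt
EXTENSION=".onnx"

# Map the lowercase element name to the capitalised directory prefix.
upper_name() {
  case "$1" in
    inceptionv1) echo InceptionV1 ;;
    inceptionv2) echo InceptionV2 ;;
    inceptionv3) echo InceptionV3 ;;
    inceptionv4) echo InceptionV4 ;;
    tinyyolov2) echo TinyYoloV2 ;;
    tinyyolov3) echo TinyYoloV3 ;;
    *) return 1 ;;
  esac
}

# Print the model file path for one element name; fail on unknown names.
model_location() {
  upper=$(upper_name "$1") || return 1
  printf '%s%s_%s/graph_%s%s\n' \
    "$MODELS_PATH" "$upper" "$INTERNAL_PATH" "$1" "$EXTENSION"
}

for model in inceptionv1 inceptionv2 inceptionv3 inceptionv4 tinyyolov2 tinyyolov3; do
  model_location "$model"
done
```

In a real run, the body of the loop would invoke the pipeline with the element name and the printed path, and `VIDEO_PATH` would need to be set beforehand.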

=== FPS Measurements ===

(Interactive chart: FPS for InceptionV1, InceptionV2, InceptionV3, InceptionV4, TinyYoloV2, and TinyYoloV3 with the ONNXRT backend.)

=== CPU Load Measurements ===

(Interactive chart: CPU load for InceptionV1, InceptionV2, InceptionV3, InceptionV4, TinyYoloV2, and TinyYoloV3 with the ONNXRT backend.)

=== Test benchmark video ===

The following video was used to perform the benchmark tests.

https://developer.ridgerun.com/wiki/index.php/File:Test_benchmark_video.mp4

== TensorRT Benchmarks ==