GstInference/Benchmarks: Difference between revisions


Revision as of 14:19, 13 April 2020

Make sure you also check GstInference's companion project: R2Inference


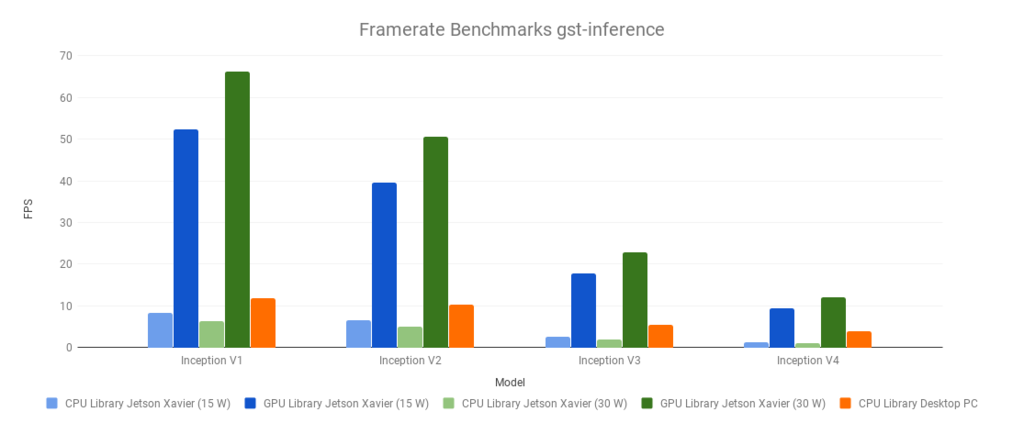

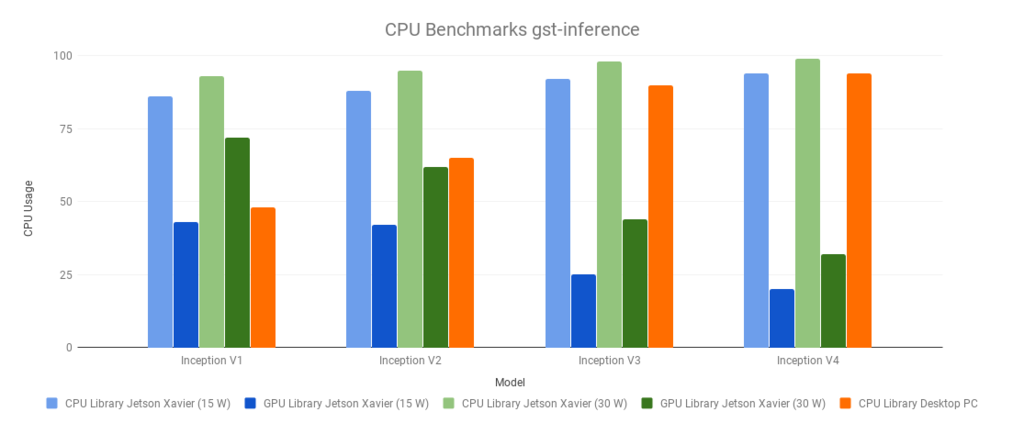

GstInference Benchmarks

The benchmarks below were run on a source video (1920x1080@60) using this base GStreamer pipeline and these environment variables:

$ VIDEO_FILE='video.mp4'

$ MODEL_LOCATION='graph_inceptionv1_tensorflow.pb'

$ INPUT_LAYER='input'

$ OUTPUT_LAYER='InceptionV1/Logits/Predictions/Reshape_1'

The environment variables were changed according to the model used (Inception V1, V2, V3 or V4).

GST_DEBUG=inception1:1 gst-launch-1.0 filesrc location=$VIDEO_FILE ! decodebin ! videoconvert ! videoscale ! queue ! net.sink_model inceptionv1 name=net model-location=$MODEL_LOCATION backend=tensorflow backend::input-layer=$INPUT_LAYER backend::output-layer=$OUTPUT_LAYER net.src_model ! perf ! fakesink -v
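Since only a few values change between runs, the benchmark can be scripted. The Python sketch below builds the base pipeline above as an argument list for one model. It is illustrative, not part of GstInference: `build_benchmark_command` is a hypothetical helper; it assumes the element for each model is named like `inceptionv1`; and because this page only lists the Inception V1 layer names, the caller must supply the layer names for V2 to V4 from the corresponding graphs. The `-v` flag is placed before the pipeline description, following the usual gst-launch-1.0 convention, and `GST_DEBUG` defaults to the value used in the command above.

```python
import os
import shlex
from typing import Dict, List, Tuple


def build_benchmark_command(
    model_element: str,
    model_location: str,
    input_layer: str,
    output_layer: str,
    video_file: str = "video.mp4",
    gst_debug: str = "inception1:1",
) -> Tuple[List[str], Dict[str, str]]:
    """Return (argv, env) for one run of the base benchmark pipeline.

    model_element is the GstInference element to use (e.g. "inceptionv1");
    the layer names must match the graph stored in model_location.
    """
    argv = [
        "gst-launch-1.0", "-v",
        "filesrc", f"location={video_file}", "!",
        "decodebin", "!", "videoconvert", "!", "videoscale", "!", "queue", "!",
        # Link the queue to the sink_model pad of the element named "net".
        "net.sink_model",
        model_element, "name=net",
        f"model-location={model_location}",
        "backend=tensorflow",
        f"backend::input-layer={input_layer}",
        f"backend::output-layer={output_layer}",
        # Report the framerate with perf and discard the buffers in fakesink.
        "net.src_model", "!", "perf", "!", "fakesink",
    ]
    # Start from the current environment and only override GST_DEBUG.
    env = dict(os.environ, GST_DEBUG=gst_debug)
    return argv, env


# Inception V1 values from the environment variables above.
argv, env = build_benchmark_command(
    "inceptionv1",
    "graph_inceptionv1_tensorflow.pb",
    "input",
    "InceptionV1/Logits/Predictions/Reshape_1",
)
print(shlex.join(argv))
# To actually run it (requires GStreamer and GstInference):
#   import subprocess; subprocess.run(argv, env=env, check=True)
```

Returning an argument list instead of a single string avoids shell quoting issues when the list is passed straight to `subprocess.run`.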

The Desktop PC had the following specifications:

- Intel(R) Core(TM) i7-3770 CPU @ 3.40GHz

- 8 GB RAM

- Cedar [Radeon HD 5000/6000/7350/8350 Series]

- Linux 4.15.0-54-generic x86_64 (Ubuntu 16.04)

The Jetson Xavier was tested in power modes 2 (15W) and 6 (30W). For more information, see Supported Modes and Power Efficiency.

- View current power mode:

$ sudo /usr/sbin/nvpmodel -q

- Change current power mode:

$ sudo /usr/sbin/nvpmodel -m x

Where x is the power mode ID (e.g. 0, 1, 2, 3, 4, 5, 6).
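When alternating between power modes across benchmark runs, the two nvpmodel commands above can be wrapped in a small script. This is a minimal Python sketch that assumes passwordless `sudo` for unattended use; the helper names are hypothetical, and with `dry_run=True` (the default) it only prints the commands instead of running them.

```python
import subprocess
from typing import List

NVPMODEL = "/usr/sbin/nvpmodel"


def query_power_mode_cmd() -> List[str]:
    """Command that shows the current power mode (nvpmodel -q)."""
    return ["sudo", NVPMODEL, "-q"]


def set_power_mode_cmd(mode_id: int) -> List[str]:
    """Command that switches to power mode mode_id (nvpmodel -m x)."""
    if mode_id < 0:
        raise ValueError("power mode IDs are non-negative integers")
    return ["sudo", NVPMODEL, "-m", str(mode_id)]


def switch_power_mode(mode_id: int, dry_run: bool = True) -> List[List[str]]:
    """Set the mode, then query it to confirm; only print when dry_run is True."""
    cmds = [set_power_mode_cmd(mode_id), query_power_mode_cmd()]
    for cmd in cmds:
        if dry_run:
            print(" ".join(cmd))
        else:
            subprocess.run(cmd, check=True)
    return cmds


# Power modes used for the Jetson Xavier benchmarks on this page.
for mode in (2, 6):
    switch_power_mode(mode)
```

Querying right after switching confirms that the mode change took effect before the next benchmark starts.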

Summary

Desktop PC

| Model | CPU Library framerate (fps) | CPU Library CPU usage (%) |
|---|---|---|
| Inception V1 | 11.89 | 48 |
| Inception V2 | 10.33 | 65 |
| Inception V3 | 5.41 | 90 |
| Inception V4 | 3.81 | 94 |
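Because framerate is the reciprocal of the average time spent per processed frame, the Desktop PC results can also be read as milliseconds per frame. This short Python sketch performs that conversion using the numbers from the table above (`frame_time_ms` is an illustrative name). Because the pipeline contains a queue, this is an average per-frame cost derived from throughput, not an end-to-end latency measurement.

```python
# Desktop PC framerates (fps) from the table above, CPU Library.
desktop_fps = {
    "Inception V1": 11.89,
    "Inception V2": 10.33,
    "Inception V3": 5.41,
    "Inception V4": 3.81,
}


def frame_time_ms(fps: float) -> float:
    """Average time per processed frame, in milliseconds."""
    return 1000.0 / fps


for model, fps in desktop_fps.items():
    print(f"{model}: {frame_time_ms(fps):.1f} ms/frame")
# Inception V1: 84.1 ms/frame
# Inception V2: 96.8 ms/frame
# Inception V3: 184.8 ms/frame
# Inception V4: 262.5 ms/frame
```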

Jetson Xavier (15W)

| Model | CPU Library framerate (fps) | CPU Library CPU usage (%) | GPU Library framerate (fps) | GPU Library CPU usage (%) |
|---|---|---|---|---|
| Inception V1 | 8.24 | 86 | 52.3 | 43 |
| Inception V2 | 6.58 | 88 | 39.6 | 42 |
| Inception V3 | 2.54 | 92 | 17.8 | 25 |
| Inception V4 | 1.22 | 94 | 9.4 | 20 |
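The 15W table compares the CPU Library and GPU Library builds on the same board. The following Python sketch computes the GPU speedup and prints it alongside the change in CPU usage, using values copied from the table above (`gpu_speedup` is an illustrative name).

```python
# Jetson Xavier (15W) results from the table above:
# model -> (CPU Library fps, CPU Library CPU %, GPU Library fps, GPU Library CPU %)
xavier_15w = {
    "Inception V1": (8.24, 86, 52.3, 43),
    "Inception V2": (6.58, 88, 39.6, 42),
    "Inception V3": (2.54, 92, 17.8, 25),
    "Inception V4": (1.22, 94, 9.4, 20),
}


def gpu_speedup(cpu_fps: float, gpu_fps: float) -> float:
    """How many times faster the GPU Library runs than the CPU Library."""
    return gpu_fps / cpu_fps


for model, (cpu_fps, cpu_use, gpu_fps, gpu_use) in xavier_15w.items():
    print(f"{model}: {gpu_speedup(cpu_fps, gpu_fps):.2f}x faster on GPU, "
          f"CPU usage {cpu_use}% -> {gpu_use}%")
# Inception V1: 6.35x faster on GPU, CPU usage 86% -> 43%
# Inception V2: 6.02x faster on GPU, CPU usage 88% -> 42%
# Inception V3: 7.01x faster on GPU, CPU usage 92% -> 25%
# Inception V4: 7.70x faster on GPU, CPU usage 94% -> 20%
```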

Jetson Xavier (30W)

| Model | CPU Library framerate (fps) | CPU Library CPU usage (%) | GPU Library framerate (fps) | GPU Library CPU usage (%) |
|---|---|---|---|---|
| Inception V1 | 6.41 | 93 | 66.27 | 72 |
| Inception V2 | 5.11 | 95 | 50.59 | 62 |
| Inception V3 | 1.96 | 98 | 22.95 | 44 |
| Inception V4 | 0.98 | 99 | 12.14 | 32 |
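Comparing the two Jetson Xavier tables shows how each library responds to the power mode. This Python sketch divides the 30W framerates by the 15W framerates, using the values from the tables above (`mode_ratios` is an illustrative name).

```python
# Framerates (fps) from the two Jetson Xavier tables above.
fps_15w = {  # model -> (CPU Library, GPU Library)
    "Inception V1": (8.24, 52.3),
    "Inception V2": (6.58, 39.6),
    "Inception V3": (2.54, 17.8),
    "Inception V4": (1.22, 9.4),
}
fps_30w = {
    "Inception V1": (6.41, 66.27),
    "Inception V2": (5.11, 50.59),
    "Inception V3": (1.96, 22.95),
    "Inception V4": (0.98, 12.14),
}


def mode_ratios(model: str):
    """Return (CPU Library, GPU Library) ratios of 30W over 15W framerate."""
    cpu15, gpu15 = fps_15w[model]
    cpu30, gpu30 = fps_30w[model]
    return cpu30 / cpu15, gpu30 / gpu15


for model in fps_15w:
    cpu_ratio, gpu_ratio = mode_ratios(model)
    print(f"{model}: CPU Library x{cpu_ratio:.2f}, GPU Library x{gpu_ratio:.2f}")
# Inception V1: CPU Library x0.78, GPU Library x1.27
# Inception V2: CPU Library x0.78, GPU Library x1.28
# Inception V3: CPU Library x0.77, GPU Library x1.29
# Inception V4: CPU Library x0.80, GPU Library x1.29
```

In this data, the 30W mode increases GPU Library framerates by roughly 27 to 29%, while CPU Library framerates decrease by roughly 20 to 23%. One possible explanation, not stated on this page, is that mode 6 keeps fewer CPU cores online than mode 2. Running `nvpmodel -q` on the device shows the active configuration.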

Charts: Framerate, CPU Usage

TensorFlow Lite Benchmarks

Charts: FPS measurement, CPU usage measurement

Test benchmark video

The following video was used to perform the benchmark tests.

To download the video, right-click it, select 'Save video as', and save it to your computer.