Building Low-Latency Video Streaming Pipelines

Low-latency video streaming on embedded systems is achieved by controlling the full path from camera capture to final display. The fastest practical workflow is to measure glass-to-glass latency first, identify in-pipeline backlog with GstShark, remove unnecessary copies and format conversions, keep live queues bounded, and tune the receiver as carefully as the sender. In most projects, use RTP/UDP for controlled endpoints, RTSP when you need standard client interoperability, and WebRTC when the receiver is a browser or an interactive application.

What low latency means in practice

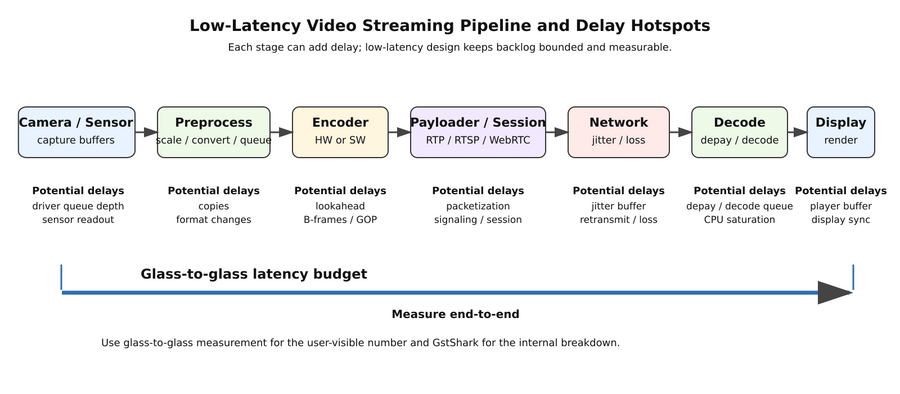

In embedded video, latency is the time from a real-world event reaching the camera sensor to that event becoming visible or usable at the receiver. The total delay is the sum of capture delay, preprocessing delay, encoder delay, packetization or session delay, network delay, receiver buffering, decode delay, and display delay.

Consider the following pipeline:

Camera -> capture -> colorspace/scale -> encoder -> payloader/session -> network -> depay/receive -> decoder -> renderer/display

Main places where delay grows:

- extra memory copies

- deep queues or non-leaky live branches

- encoder lookahead / B-frames / large GOPs

- jitter buffers and player buffering

- display synchronization at the receiver

Latency budget

A useful engineering model is:

Total latency ≈ capture + preprocess + encode + packetize/session + network/jitter + depacketize + decode + render/display

This model matters because transport is only one term in the equation. A system can use a low-overhead transport and still feel slow because the capture path, encoder, receiver, or player is buffering too aggressively.
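
As a rough illustration of this model, the sketch below sums a per-stage budget and reports the largest contributor. The stage names follow the terms above; every millisecond value is a made-up placeholder, not a measurement, and should be replaced with numbers from your own traces.

```python
# Hypothetical per-stage delays in milliseconds (placeholders, not measurements).
budget_ms = {
    "capture": 33.0,
    "preprocess": 4.0,
    "encode": 8.0,
    "packetize/session": 1.0,
    "network/jitter": 20.0,
    "depacketize": 1.0,
    "decode": 6.0,
    "render/display": 17.0,
}


def summarize(budget):
    """Return (total, name of the largest stage, that stage's share of the total)."""
    total = sum(budget.values())
    worst = max(budget, key=budget.get)
    return total, worst, budget[worst] / total


total_ms, worst_stage, share = summarize(budget_ms)
print(f"total ≈ {total_ms:.1f} ms; largest term: {worst_stage} ({share:.0%})")
```

With these placeholder values the transport term is not the largest one, which is the point of the model: the budget shows where tuning effort is likely to pay off first.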

Typical delay hotspots

In embedded GStreamer systems, delay usually appears in a few predictable places:

- sensor capture and driver buffering

- CPU color conversion or unnecessary format conversion

- copies between non-accelerated and accelerated memory domains

- encoder settings optimized for compression ratio instead of immediacy

- queue elements that absorb bursts but also hide backlog

- network jitter buffers and player defaults

A good low-latency design does not remove all buffering. It keeps only the buffering required for stability and makes every remaining queue observable.

Common anti-patterns

The same problems appear repeatedly in low-latency projects:

- measuring only sender-side FPS and assuming latency is solved

- tuning the sender while leaving the receiver at default buffering values

- adding deeper queues before checking where the backlog starts

- using software color conversion when a hardware path exists

- enabling encoder features intended for quality or compression, not immediacy

How to measure latency in embedded systems

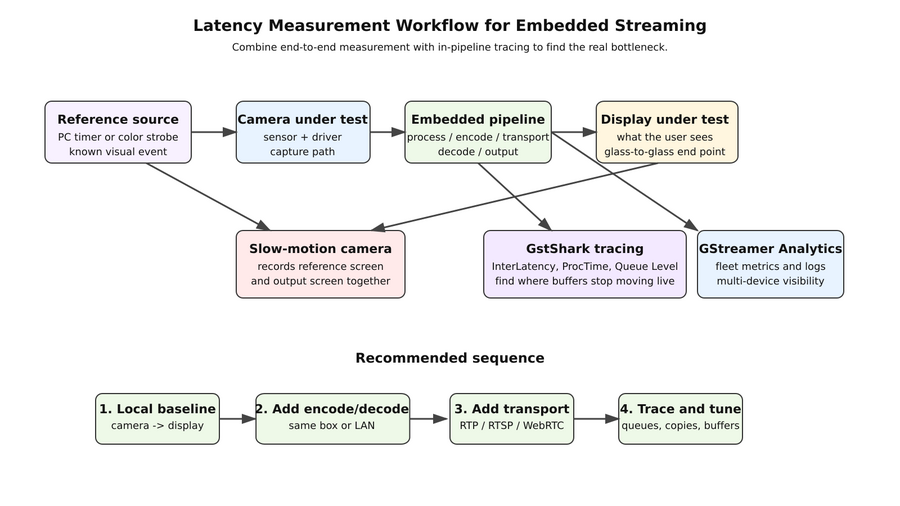

The fastest way to improve a pipeline is to separate end-to-end latency from in-pipeline latency. End-to-end measurement tells you what the user experiences. In-pipeline measurement tells you which element or stage is responsible.

Recommended measurement workflow

- Capture a reproducible glass-to-glass number.

- Trace the pipeline with GstShark.

- Record sender, receiver, network, and display settings.

- Change one variable at a time.

- Re-measure under the same test conditions.

Glass-to-glass latency

If the question is "How much delay does the user actually see?", glass-to-glass latency is the right metric. RidgeRun's Jetson glass-to-glass latency guide documents two practical methods for doing this on real hardware.[1]

Slow-motion forwarding frames method

This is the best default method for most teams because it is inexpensive and repeatable.

- Display a high-resolution timer or color strobe on a reference screen.

- Point the camera under test at that screen.

- Show the captured output on a second screen.

- Record both screens with a slow-motion camera, ideally 240 fps or higher.

- Advance the recorded clip frame by frame and count how many frames it takes for a change on the reference screen to appear on the output screen.

- Multiply the frame count by the slow-motion camera frame time.

At 240 fps, one frame is 4.167 ms. If a change appears 12 frames later, the measured glass-to-glass latency is about 50 ms, with an uncertainty roughly equal to one slow-motion frame.
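
The arithmetic above can be sketched as a small helper. The function name and its return shape are illustrative choices, not part of any RidgeRun tool.

```python
def glass_to_glass_ms(frame_count, camera_fps):
    """Convert counted slow-motion frames into (latency_ms, uncertainty_ms).

    The uncertainty is taken as one slow-motion frame period.
    """
    if frame_count < 0 or camera_fps <= 0:
        raise ValueError("frame_count must be >= 0 and camera_fps must be > 0")
    frame_time_ms = 1000.0 / camera_fps
    return frame_count * frame_time_ms, frame_time_ms


latency_ms, uncertainty_ms = glass_to_glass_ms(12, 240)
print(f"{latency_ms:.1f} ms ± {uncertainty_ms:.3f} ms")  # prints "50.0 ms ± 4.167 ms"
```

A faster slow-motion camera shrinks the uncertainty term directly, which is why 240 fps or higher is recommended.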

A useful terminal timer from the RidgeRun Jetson page is:

    while true; do echo -ne "$(date +%H:%M:%S:%N)\r"; done

Sub-frame resolution method

If you need better than slow-motion-camera resolution, use a controlled light event and a fast optical sensor. RidgeRun's Jetson guide describes a setup using an Arduino, an LED, and a photodiode-based detector. This method requires more hardware effort, but it is a better fit for precise characterization and for systems where a single video frame is already too coarse.

| Platform / path | Resolution / rate | Reported glass-to-glass latency |
|---|---|---|
| Jetson TX2 using nvarguscamerasrc ! nvoverlaysink | 1080p30 | 73.2 ms |
| Jetson Xavier AGX using nvarguscamerasrc ! nvoverlaysink | 1080p30 | 103.3 ms |
| Jetson Nano using nvarguscamerasrc ! nv3dsink | 720p60 | 96 ms |

These figures are useful as local-display baselines, not as universal targets. They show that a meaningful part of the budget may already be spent before a packet ever leaves the device.

In-pipeline latency with GstShark

If glass-to-glass numbers are too high, the next question is "Which stage is adding the time?" RidgeRun's GstShark is designed to answer exactly that question. Its InterLatency tracer measures how long a buffer takes to travel from the source toward downstream elements, and its Processing Time tracer helps reveal which filter-like elements are taking too long to produce output.[2][3]

A minimal InterLatency example from the RidgeRun documentation is:

    GST_DEBUG="GST_TRACER:7" GST_TRACERS="interlatency" \
      gst-launch-1.0 videotestsrc ! queue ! videorate max-rate=15 ! fakesink sync=true

For practical low-latency work, use the same tracer strategy on your real capture, encode, transport, and decode path. The goal is not just to collect trace files; it is to find the stage where buffers stop moving at live speed.
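
One way to turn trace output into a per-stage view is to average the reported interlatency per destination pad. The sketch below is a minimal example under a stated assumption: it expects log lines shaped like the embedded sample, with from_pad, to_pad, and time fields. That line shape and the sample values are illustrative and should be checked against the output of your own GstShark version before relying on the parser.

```python
import re
from collections import defaultdict

# Assumed line shape and made-up values; verify against your real trace output.
SAMPLE_LOG = """\
0:00:01.000000000 1234 0x55 TRACE GST_TRACER :0:: interlatency, from_pad=(string)videotestsrc0_src, to_pad=(string)queue0_src, time=(string)0:00:00.000030000;
0:00:01.066000000 1234 0x55 TRACE GST_TRACER :0:: interlatency, from_pad=(string)videotestsrc0_src, to_pad=(string)fakesink0_sink, time=(string)0:00:00.066000000;
0:00:01.100000000 1234 0x55 TRACE GST_TRACER :0:: interlatency, from_pad=(string)videotestsrc0_src, to_pad=(string)queue0_src, time=(string)0:00:00.000050000;
0:00:01.168000000 1234 0x55 TRACE GST_TRACER :0:: interlatency, from_pad=(string)videotestsrc0_src, to_pad=(string)fakesink0_sink, time=(string)0:00:00.068000000;
"""

LINE_RE = re.compile(
    r"interlatency, from_pad=\(string\)(?P<src>[^,]+), "
    r"to_pad=\(string\)(?P<dst>[^,]+), "
    r"time=\(string\)(?P<h>\d+):(?P<m>\d+):(?P<s>\d+)\.(?P<ns>\d{9})"
)


def mean_interlatency_ms(log_text):
    """Return {destination pad: mean source-to-pad latency in ms}."""
    samples = defaultdict(list)
    for m in LINE_RE.finditer(log_text):
        seconds = int(m["h"]) * 3600 + int(m["m"]) * 60 + int(m["s"])
        samples[m["dst"]].append(seconds * 1000.0 + int(m["ns"]) / 1e6)
    return {pad: sum(values) / len(values) for pad, values in samples.items()}


result = mean_interlatency_ms(SAMPLE_LOG)
# Print the slowest pads first: a large jump between neighbours marks the backlog.
for pad, ms in sorted(result.items(), key=lambda item: item[1], reverse=True):
    print(f"{pad}: {ms:.3f} ms")
```

Reading the pads in pipeline order, the first pad where the mean jumps is a reasonable candidate for the stage where buffers stop moving at live speed.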

Continuous monitoring with GStreamer Analytics

Once the pipeline works on one bench setup, the next problem is keeping it healthy across devices and over time. RidgeRun GStreamer Analytics is useful at this stage because it centralizes metrics, resource usage, process behavior, and pipeline logs from systems running GStreamer pipelines.[4]

That makes it a good companion to one-off tracing: use GstShark when you need to understand a bottleneck in detail, and use RidgeRun GStreamer Analytics when you need operational visibility across many runs or many devices.

Latency optimization techniques

The most reliable way to reduce latency is to remove old frames from the system before they pile up. Low-latency tuning is therefore a mix of throughput optimization and backlog prevention.

Reduce copies and format conversions

Every copy or format conversion in the live path consumes time and memory bandwidth. RidgeRun's Embedded GStreamer Performance Tuning guide shows the general pattern clearly: avoid unnecessary copies, use hardware-friendly memory paths, and prefer encoder settings that reduce per-frame work.[5]

Examples from RidgeRun's tuning guide include properties such as always-copy=false on the source path, speed-oriented encoder settings like encodingpreset=2 and single-nalu=true, and disabling unnecessary sink-side buffer retention with enable-last-buffer=false. Even if your exact element names differ on a modern SoC, the transferable lesson is the same: reduce memory movement and reduce avoidable work.

Tune queues for live traffic

Queues are necessary, but hidden backlog is one of the main causes of "mysterious" latency growth. For live video:

- keep queue depths intentionally small

- use leaky behavior on branches where "latest frame wins" is preferable to "never drop"

- inspect queue growth with tracing instead of guessing

- add buffers only when traces prove that a source or driver is starving

RidgeRun's performance tuning guide also provides an important nuance: increasing a source queue or buffer pool can improve throughput stability when the driver cannot recycle buffers quickly enough. That is a throughput fix, not a universal low-latency rule. Use it only when measurement shows starvation, and verify that the change did not simply move the backlog elsewhere.
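
To make the cost of queue depth concrete, the sketch below models a bounded "latest frame wins" queue and estimates how old the oldest queued frame can get. It is a plain Python model for illustration, not GStreamer code; the class and function names are hypothetical. Dropping the oldest entry when full mirrors what a queue configured with leaky=downstream does.

```python
from collections import deque


def worst_case_queue_delay_ms(max_buffers, fps):
    """Approximate age of the oldest frame in a full queue fed at a steady rate."""
    return max_buffers * 1000.0 / fps


class LatestFrameQueue:
    """Bounded queue that drops the oldest frame when full."""

    def __init__(self, max_buffers):
        self._frames = deque(maxlen=max_buffers)
        self.dropped = 0

    def push(self, frame):
        if len(self._frames) == self._frames.maxlen:
            self.dropped += 1  # deque(maxlen=...) discards the oldest entry on append
        self._frames.append(frame)

    def pop(self):
        return self._frames.popleft() if self._frames else None


q = LatestFrameQueue(max_buffers=4)
for frame_id in range(10):  # producer delivers 10 frames while the consumer is stalled
    q.push(frame_id)

oldest = q.pop()
print(f"dropped={q.dropped}, next frame out={oldest}")
print(f"4 buffers at 30 fps can hide about {worst_case_queue_delay_ms(4, 30):.0f} ms")
```

A non-leaky queue of the same depth would instead block the producer and keep the stale frames, so the same four buffers become delay that every later frame inherits.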

Use live-oriented encoder settings

For live interactive pipelines, prefer encoder configurations that reduce lookahead and keep frames moving:

- latency-oriented presets

- short GOP or keyframe interval appropriate to the use case

- no unnecessary B-frames

- hardware encoders where available

- bounded bitrate and buffering consistent with the network path

The exact properties are encoder-specific, but the goal is always the same: produce decodable frames quickly and predictably.
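
Two of these settings translate directly into time. The sketch below is a simplified model, assuming a constant frame rate and ignoring any internal encoder pipelining: each consecutive B-frame has to wait for a later reference frame, and a receiver that joins or loses sync may wait up to one keyframe interval before it can decode. The function names are illustrative.

```python
def reorder_delay_ms(consecutive_b_frames, fps):
    """Minimum extra encoder delay caused by waiting for future reference frames."""
    return consecutive_b_frames * 1000.0 / fps


def max_keyframe_wait_ms(keyframe_interval_frames, fps):
    """Longest wait for the next keyframe after joining or losing sync."""
    return keyframe_interval_frames * 1000.0 / fps


b_delay = reorder_delay_ms(2, 30)        # two B-frames at 30 fps
key_wait = max_keyframe_wait_ms(30, 30)  # keyframe every 30 frames at 30 fps
print(f"B-frame reorder delay: at least {b_delay:.1f} ms")
print(f"worst-case keyframe wait: {key_wait:.0f} ms")
```

A shorter keyframe interval speeds up recovery but costs bitrate, so the interval is a trade-off to choose per use case rather than a value to minimize blindly.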

Control receiver buffering

A pipeline can be carefully tuned on the sender side and still look slow because the receiver client buffers too much. RidgeRun's GstRtspSink - VLC - Modify Streaming Buffer documentation notes that VLC can add about one second of buffering by default. That is large enough to dominate the end-to-end result.[6]

So the receiver is part of the latency budget. Always record:

- receiver type

- jitter buffer settings

- player buffering settings

- sink synchronization settings

If you do not document the receiver configuration, your latency number is incomplete.

Re-measure after each change

Do not batch many changes and measure once. A strong workflow is:

- change one variable

- repeat the same test conditions

- capture glass-to-glass latency

- inspect InterLatency and Processing Time traces

- decide whether the change improved responsiveness, stability, both, or neither

Each iteration of this method is slower than guessing, but the overall process converges much faster than intuition alone.
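
A minimal sketch of the final decision step, assuming several glass-to-glass samples were taken before and after a single change. The sample values are hypothetical, and the rule of treating any difference smaller than the measurement resolution as inconclusive is one reasonable convention, not a prescribed method.

```python
from statistics import mean


def classify_change(before_ms, after_ms, resolution_ms):
    """Label a change as 'improved', 'regressed', or 'inconclusive'.

    resolution_ms is the measurement uncertainty, for example one
    slow-motion camera frame period.
    """
    delta = mean(after_ms) - mean(before_ms)
    if abs(delta) <= resolution_ms:
        return "inconclusive", delta
    return ("improved" if delta < 0 else "regressed"), delta


# Hypothetical glass-to-glass samples in ms, measured with a 240 fps camera.
before = [104.2, 100.0, 108.3]
after = [79.2, 83.3, 75.0]
label, delta = classify_change(before, after, resolution_ms=1000.0 / 240)
print(f"{label}: mean latency changed by {delta:+.1f} ms")
```

Keeping the raw samples, not only the verdict, makes it possible to revisit the decision later if test conditions turn out to have drifted.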

GStreamer pipelines for low-latency streaming

For low latency, start with the smallest working live pipeline, verify it locally, and only then add control protocols, signaling, or browser integration. The sections below provide practical starting points and RidgeRun-specific examples that can be adapted to the target platform.

RTP over UDP baseline

If both endpoints are under your control and you want the leanest transport path, start with RTP over UDP. It gives you a clean baseline before adding RTSP session control or WebRTC signaling and NAT traversal.

    # Sender: capture 720p30, encode with x264 in zero-latency mode, send RTP/H.264 over UDP
    HOST=192.168.1.10
    PORT=5004
    gst-launch-1.0 -e \
      v4l2src device=/dev/video0 ! video/x-raw,width=1280,height=720,framerate=30/1 ! \
      queue leaky=downstream max-size-buffers=4 ! videoconvert ! \
      x264enc tune=zerolatency speed-preset=ultrafast key-int-max=30 bitrate=4000 ! \
      h264parse config-interval=-1 ! rtph264pay pt=96 ! \
      udpsink host=$HOST port=$PORT sync=false async=false

    # Receiver: small jitter buffer, software decode, render without clock sync
    PORT=5004
    gst-launch-1.0 -e \
      udpsrc port=$PORT caps="application/x-rtp,media=video,encoding-name=H264,payload=96,clock-rate=90000" ! \
      rtpjitterbuffer latency=10 drop-on-latency=true ! \
      rtph264depay ! h264parse ! avdec_h264 ! \
      queue max-size-buffers=2 leaky=downstream ! autovideosink sync=false

On NVIDIA-based systems, a common next step is to replace software conversion and encoding with platform elements such as nvvidconv and nvv4l2h264enc. On other SoCs, the same principle applies: keep the live path as close as possible to the platform's zero-copy and hardware-accelerated path.

RTSP with RidgeRun GstRtspSink

If the question is "How do I expose a standard network stream that cameras, media clients, and NVR-like systems can consume?", RTSP is usually the better answer. GstRtspSink wraps RTSP/RTP serving as a GStreamer sink element, which keeps the pipeline design explicit and easy to prototype with gst-launch-1.0.[7]

A practical RidgeRun example combines H.264 video and AAC audio into a single mapping:

    PORT=12345
    MAPPING=/stream
    gst-launch-1.0 rtspsink name=sink service=$PORT \
      v4l2src ! queue ! videoconvert ! x264enc tune=zerolatency ! h264parse ! \
      capsfilter caps="video/x-h264, mapping=${MAPPING}" ! sink. \
      alsasrc ! voaacenc ! aacparse ! \
      capsfilter caps="audio/mpeg, mapping=${MAPPING}" ! sink.

A matching GStreamer client is:

    IP_ADDRESS=127.0.0.1
    PORT=12345
    MAPPING=stream
    gst-launch-1.0 rtspsrc location=rtsp://${IP_ADDRESS}:${PORT}/${MAPPING} name=src \
      src. ! rtph264depay ! h264parse ! avdec_h264 ! queue ! autovideosink \
      src. ! rtpmp4adepay ! aacparse ! avdec_aac ! queue ! autoaudiosink

Important design details from the RidgeRun GstRtspSink documentation:

- Each branch is attached to "sink." using standard request-pad notation.

- A mapping is assigned through negotiated caps such as mapping=/video or mapping=/audiovideo.

- Audio and video branches become one synchronized A/V stream when they share the same mapping.

- The RTSP service defaults to TCP port 554, so development pipelines usually use a higher, unprivileged port such as 3000 or 12345.

This makes GstRtspSink a strong fit when you want explicit server-side control, multiple named streams, or standard RTSP client compatibility.

RTP with bandwidth estimation

When network conditions vary, a pipeline that was locally fast can become unstable or start buffering. RidgeRun's Network Congestion Control element GstRTPNetCC is relevant here because it estimates available bandwidth from RTP information and is designed to sit in the RTP path.[8]

RidgeRun's basic usage example shows the element in the RTP chain like this:

gst-launch-1.0 ... ! rtph264pay ! rtpnetcc ! rtph264depay ! ...

The same documentation also shows the equivalent VP8 pattern:

gst-launch-1.0 ... ! rtpvp8pay ! rtpnetcc ! rtpvp8depay ! ...

Use this approach when raw RTP is the right transport but the network path is variable enough that passive buffering is no longer acceptable.

RidgeRun tools that fit this workflow

The most relevant RidgeRun projects and products for low-latency streaming are:

- GstRtspSink for RTSP/RTP serving with mappings, multicast, authentication, and HTTP tunneling

- GstRTPNetCC for RTP bandwidth estimation in variable network conditions

- GstShark for tracing latency, processing time, queue levels, and other pipeline behavior

- RidgeRun GStreamer Analytics for centralized monitoring and analysis of running GStreamer systems

- Reducing audio video streaming latency for older but still useful latency background and troubleshooting guidance

- RidgeRun Low Latency Network Kit - GStreamer pipelines when packet transport performance is the primary bottleneck

Validation checklist

Before reporting that a pipeline is "low latency", make sure you captured all of the following:

- camera source, resolution, framerate, and sensor mode

- sender pipeline and encoder settings

- transport protocol and network conditions

- receiver application and buffering configuration

- display sink synchronization settings

- glass-to-glass latency

- in-pipeline latency traces

- CPU, GPU, memory, and bandwidth observations when relevant
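
The checklist can also be kept as a small record so that an incomplete report is easy to spot. This is a sketch only: the class name, field names, and example values are hypothetical, and the resource observations are treated as optional because the checklist asks for them only when relevant.

```python
from dataclasses import dataclass, fields
from typing import Optional

OPTIONAL_FIELDS = {"resource_notes"}  # CPU, GPU, memory, bandwidth: only when relevant


@dataclass
class LatencyReport:
    camera: Optional[str] = None             # source, resolution, framerate, sensor mode
    sender_pipeline: Optional[str] = None    # pipeline string and encoder settings
    transport: Optional[str] = None          # protocol and network conditions
    receiver: Optional[str] = None           # application and buffering configuration
    sink_sync: Optional[str] = None          # display sink synchronization settings
    glass_to_glass_ms: Optional[float] = None
    trace_files: Optional[str] = None        # in-pipeline latency traces
    resource_notes: Optional[str] = None

    def missing(self):
        """Return the names of required fields that have not been filled in."""
        return [
            f.name
            for f in fields(self)
            if f.name not in OPTIONAL_FIELDS and getattr(self, f.name) is None
        ]


report = LatencyReport(
    camera="example sensor, 1280x720 @ 30 fps",
    transport="RTP/UDP on a wired LAN",
    glass_to_glass_ms=50.0,
)
print("still missing:", ", ".join(report.missing()))
```

A report is ready to share only when missing() returns an empty list.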

Key Takeaways

- Low latency is a system property, not a protocol checkbox.

- Measure glass-to-glass delay for user-visible performance, and measure in-pipeline delay for root-cause analysis.

- RTP is the cleanest baseline, RTSP is the better fit for standard device-style streaming, and WebRTC is the better fit for interactive and browser-facing delivery.

- Keep live queues bounded and observable.

- Optimize memory movement before chasing micro-optimizations in the transport.

- Document the receiver and player configuration, because client buffering can dominate the result.

FAQ

- What is the fastest way to reduce latency in a GStreamer video pipeline?

- Measure first, then remove unnecessary copies and format conversions, bound live queues, use encoder settings that avoid lookahead, and tune the receiver as carefully as the sender.

- How should I measure glass-to-glass latency on an embedded device?

- Start with a slow-motion camera method because it is inexpensive and repeatable. If you need better than one video-frame resolution, use a controlled light event and an optical sensor.

- Should I use RTP, RTSP, or WebRTC for low latency?

- Use RTP when you control both endpoints and want the leanest path, RTSP when interoperability with standard clients matters, and WebRTC when the receiver is a browser or an interactive application.

- Why is the viewer still slow after the sender is tuned?

- Because the receiver may still be buffering aggressively. Player defaults, jitter buffers, decode scheduling, and display synchronization can add more delay than the sender pipeline itself.

- When do larger queues help instead of hurt?

- Larger queues help only when measurement shows starvation or driver recycling problems. Otherwise, they often hide backlog and increase end-to-end delay.

- When should I use GstShark, GstRTPNetCC, and GStreamer Analytics?

- Use GstShark to find where latency is accumulating inside a pipeline, GstRTPNetCC when the RTP network path is variable, and GStreamer Analytics when you need ongoing visibility across devices or repeated runs.

Related RidgeRun pages

- RidgeRun Developer Wiki Main Page

- GstRtspSink

- GstRtspSink - Basic usage

- GstRtspSink - Audio+Video Streaming

- GStreamer WebRTC Wrapper

- GstWebRTC Pipelines

- Introduction to RidgeRun's GstWebRTC

- GstRTPNetCC

- GstRTPNetCC Basic Usage

- GstShark

- GstShark - InterLatency tracer

- GstShark - Processing Time tracer

- Jetson glass-to-glass latency

- Embedded GStreamer Performance Tuning

- Reducing audio video streaming latency

- RidgeRun Low Latency Network Kit - GStreamer pipelines

- RidgeRun GStreamer Analytics Overview

- RidgeRun Multimedia Streaming Solutions

External references and standards

- GStreamer RTP and RTSP support

- GStreamer RTSP server documentation

- RFC 3550 - RTP: A Transport Protocol for Real-Time Applications

- WebRTC 1.0: Real-Time Communication Between Browsers

- Improving Latency on the Holoscan Sensor Bridge with CUDA ISP

- RidgeRun GStreamer Development & Consulting Services
