Jetson Glass-to-Glass Latency: Performance Optimization Guide

Problems running the pipelines shown on this page? Please see our GStreamer Debugging guide for help.

Introduction to Jetson Glass to Glass Latency

This wiki serves as a reference for the capture-to-display (glass to glass) latency of the Jetson platforms, measured with the simplest possible GStreamer pipeline. The tests were executed with the following camera sensors:

- IMX274 on TX1 for the 1080p@60fps and 4K@60fps modes, JetPack 3.0.

- IMX274 on TX2 for the 1080p@30fps, 1080p@60fps and 4K@60fps modes, JetPack 3.3.

- OV5693 on TX2 for the 1080p 30fps mode, JetPack 4.2.

- OV5693 on Xavier AGX for the 1080p 30fps mode, JetPack 4.5.1.

- IMX219 on Nano for the 1080p@30fps and 720p@60fps modes, JetPack 4.6.3.

Jetson TX1 Latency Test

The tests were done using a modified nvcamerasrc binary provided by NVIDIA, which reduces the minimum allowed value of the queue-size property from 10 to 2 buffers. This binary was built for JetPack 3.0 (L4T 24.2.1).

Note: The reported values are the average of 10 samples.

1080p 60fps glass to glass latency

Glass to glass latency test for 1080p 60fps IMX274 camera mode.

- It is strictly necessary to first run the following command on the TX1 before running the test pipeline:

sudo ~/jetson_clocks.sh

Test pipeline:

gst-launch-1.0 nvcamerasrc queue-size=6 sensor-id=0 fpsRange='60 60' ! \
'video/x-raw(memory:NVMM), width=1920, height=1080,format=I420,framerate=60/1' ! \
perf print-arm-load=true ! nvoverlaysink sync=true enable-last-sample=false

- Glass to glass latency = 43 ms

4K 60fps glass to glass latency

Glass to glass latency test for 4K 60fps IMX274 camera mode.

- It is strictly necessary to first run the following command on the TX1 before running the test pipeline:

sudo ~/jetson_clocks.sh

Test pipeline:

gst-launch-1.0 nvcamerasrc queue-size=6 sensor-id=0 fpsRange='60 60' ! \
'video/x-raw(memory:NVMM), width=3840, height=2160,format=I420,framerate=60/1' ! \
perf print-arm-load=true ! nvoverlaysink sync=true enable-last-sample=false

- Glass to glass latency = 43 ms

Jetson TX2 Latency Test

JetPack 4.2

The test below used the nvarguscamerasrc GStreamer capture plugin on JetPack 4.2 with the Jetson devkit onboard OV5693 camera. The reported values are the average of 10 samples.

1080p 30fps glass to glass latency

Glass to glass latency test for 1080p 30fps OV5693 camera mode.

- You have to run the following command to enable the Max-N mode. Please find more information about this mode in RidgeRun's developer wiki on NVIDIA Jetson TX2 NVP model.

sudo nvpmodel -m 0

- After enabling the Max-N mode, you need to reboot.

- It is strictly necessary to first run the following command on the TX2 after reboot and before running the test pipeline:

sudo jetson_clocks

Test pipeline:

gst-launch-1.0 nvarguscamerasrc ! 'video/x-raw(memory:NVMM), width=(int)1920, height=(int)1080, format=(string)NV12, framerate=(fraction)30/1' ! nvoverlaysink

- Glass to glass latency = 73.2 ms

JetPack 3.3

The test below used the nvcamerasrc GStreamer capture plugin on JetPack 3.3 with the IMX274 camera. The reported values are the average of 10 samples.

1080p 30fps glass to glass latency

Glass to glass latency test for 1080p 30fps IMX274 camera mode.

- You have to run the following command to enable the Max-N mode. Please find more information about this mode in RidgeRun's developer wiki on NVIDIA Jetson TX2 NVP model.

sudo nvpmodel -m 0

- After enabling the Max-N mode, you need to reboot.

- It is strictly necessary to first run the following command on the TX2 after reboot and before running the test pipeline:

sudo jetson_clocks

Test pipeline:

gst-launch-1.0 -v nvcamerasrc queue-size=10 sensor-id=1 fpsRange='30 30' ! "video/x-raw(memory:NVMM),width=1920,height=1080,format=I420,framerate=30/1" ! perf print-arm-load=true ! nvoverlaysink enable-last-sample=false

- Glass to glass latency = 60.8977412 ms

1080p 60fps glass to glass latency

Glass to glass latency test for 1080p 60fps IMX274 camera mode.

- You have to run the following command to enable the Max-N mode. Please find more information about this mode in RidgeRun's developer wiki on NVIDIA Jetson TX2 NVP model.

sudo nvpmodel -m 0

- After enabling the Max-N mode, you need to reboot.

- It is strictly necessary to first run the following command on the TX2 after reboot and before running the test pipeline:

sudo jetson_clocks

Test pipeline:

gst-launch-1.0 -v nvcamerasrc queue-size=10 sensor-id=1 fpsRange='60 60' ! "video/x-raw(memory:NVMM),width=1920,height=1080,format=I420,framerate=60/1" ! perf print-arm-load=true ! nvoverlaysink enable-last-sample=false

- Glass to glass latency = 121.480387 ms

4K 60fps glass to glass latency

Glass to glass latency test for 4K 60fps IMX274 camera mode.

- You have to run the following command to enable the Max-N mode. Please find more information about this mode in RidgeRun's developer wiki on NVIDIA Jetson TX2 NVP model.

sudo nvpmodel -m 0

- After enabling the Max-N mode, you need to reboot.

- It is strictly necessary to first run the following command on the TX2 after reboot and before running the test pipeline:

sudo jetson_clocks

Test pipeline:

gst-launch-1.0 -v nvcamerasrc queue-size=10 sensor-id=1 fpsRange='60 60' ! "video/x-raw(memory:NVMM),width=3840,height=2160,format=I420,framerate=60/1" ! perf print-arm-load=true ! nvoverlaysink enable-last-sample=false

- Glass to glass latency = 112.2042693 ms

Jetson Xavier AGX Latency Test

JetPack 4.5.1

The test below used JetPack 4.5.1 and the Jetson devkit onboard OV5693 camera, with a Dell U2718Q monitor that has around 9 ms of input lag. The reported values are the average of 10 samples.

Previous steps

- You have to run the following command to enable the Max-N mode.

sudo nvpmodel -m 0

- After enabling the Max-N mode, you need to reboot.

- It is strictly necessary to first run the following command on the Xavier after reboot and before running the test pipeline:

sudo jetson_clocks

720p 30fps - Jetson Multimedia API sample

Glass to glass latency test for 720p 30fps OV5693 camera mode.

- Test command

./camera_jpeg_capture -s --cap-time 1000 --fps 30 --disable-jpg --sensor-mode 2 --pre-res 1280x720

- Glass to glass latency = 95.83333333 ms

720p 60fps - Jetson Multimedia API sample

Glass to glass latency test for 720p 60fps OV5693 camera mode.

- Test command

./camera_jpeg_capture -s --cap-time 1000 --fps 60 --disable-jpg --sensor-mode 2 --pre-res 1280x720

- Glass to glass latency = 72.5 ms

1080p 30fps - Jetson Multimedia API sample

Glass to glass latency test for 1080p 30fps OV5693 camera mode.

- Test command

./camera_jpeg_capture -s --cap-time 1000 --fps 30 --disable-jpg --sensor-mode 1 --pre-res 1920x1080

- Glass to glass latency = 116.6666667 ms

1080p 30fps - nvarguscamerasrc (GStreamer)

Glass to glass latency test for 1080p 30fps OV5693 camera mode.

- Test pipeline

gst-launch-1.0 nvarguscamerasrc ! 'video/x-raw(memory:NVMM), width=(int)1920, height=(int)1080, format=(string)NV12, framerate=(fraction)30/1' ! nvoverlaysink

- Glass to glass latency = 103.3333333 ms

Jetson Nano Latency Test

JetPack 4.6.3

1080p 30fps - nvarguscamerasrc (GStreamer)

Glass to glass latency test for 1080p 30fps IMX219 camera.

- Test pipeline

gst-launch-1.0 nvarguscamerasrc ! 'video/x-raw(memory:NVMM), width=(int)1920, height=(int)1080, format=(string)NV12, framerate=(fraction)30/1' ! nv3dsink

- Glass to glass latency = 98 ms

720p 60fps - nvarguscamerasrc (GStreamer)

Glass to glass latency test for 720p 60fps IMX219 camera.

- Test pipeline

gst-launch-1.0 nvarguscamerasrc ! 'video/x-raw(memory:NVMM), width=(int)1280, height=(int)720, format=(string)NV12, framerate=(fraction)60/1' ! nv3dsink

- Glass to glass latency = 96 ms

Reliable methods for measuring glass to glass latency

This section explains two reliable methods for measuring the glass to glass latency of a system.

The “industry standard” in measuring video latency is nothing short of terrible. It goes something like this: take a portable device, run some kind of stopwatch application on it, point the camera under test at its screen, take a picture that includes both the camera display and the stopwatch, then subtract one reading from the other. This method is very inaccurate because it depends on multiple factors.

Several elements here can influence the timing resolution: the freeware stopwatch not being written for the application (simply simulating a live, running stopwatch, and not actually implementing one with live output), the digital camera frame exposure time if the two stopwatches are not horizontally aligned (rolling shutter), the portable device display refresh rate, the FPV video system framerate, etc. Taken together, those errors can easily exceed the actual latency of an analog FPV system, where glass to glass latency is usually around 20 milliseconds, a number that is not possible to measure with the stopwatch method.

The most critical factor is the refresh rate of the stopwatch. In most cases, the test uses an online stopwatch with millisecond resolution or, in the best case, a good-quality physical one. Most online stopwatches have a very poor refresh rate, which makes them useless for a reliable and precise glass to glass latency measurement. At RidgeRun, we used to use this online stopwatch (http://stopwatch.onlineclock.net/). It refreshes only every 43 ms, so a nonzero reading cannot be lower than 43 ms, which leads to errors in the measurements. Physical stopwatches are the alternative, but one with 1 ms resolution and a good refresh rate is difficult to find and expensive.
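The effect of a coarse refresh interval can be illustrated with a simplified model. The sketch below assumes that the stopwatch display only changes every `refresh_ms` and keeps showing the last refreshed value; it ignores monitor refresh, camera exposure, and the other error sources mentioned above. The function names and the 20 ms true latency are illustrative assumptions, not part of any tool used for the tests on this page.

```python
def displayed_ms(true_ms: int, refresh_ms: int) -> int:
    """Value shown by a stopwatch that only redraws every refresh_ms (assumed model)."""
    return (true_ms // refresh_ms) * refresh_ms


def photo_reading_ms(photo_time_ms: int, latency_ms: int, refresh_ms: int) -> int:
    """Latency read from one photo: stopwatch value minus the value on the camera display."""
    on_stopwatch = displayed_ms(photo_time_ms, refresh_ms)
    # The camera display shows the stopwatch as it looked latency_ms earlier.
    on_camera_display = displayed_ms(photo_time_ms - latency_ms, refresh_ms)
    return on_stopwatch - on_camera_display


if __name__ == "__main__":
    # Hypothetical system: 20 ms true latency, stopwatch refreshing every 43 ms.
    readings = {photo_reading_ms(t, 20, 43) for t in range(1000, 2000)}
    print(sorted(readings))  # every photo reads either 0 ms or 43 ms
```

Under this model, no single photo can report the true 20 ms: the reading is always a multiple of the refresh interval.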

The sections below describe two reliable methods to measure glass to glass latency on a system.

What is latency?

Before measuring it, we had better define it. Latency is the time it takes for something to propagate through a system, and glass to glass latency is the time it takes for something to go from the glass of a camera to the glass of a display.

Slow Motion Forwarding Frames Method

This method is the simplest and quickest way to obtain reliable glass to glass latency values for a system. Its great advantage is that it is completely independent of the refresh rate of the timer: it only counts how many recorded frames it takes for a change to appear on the screen of the system under measurement.

To apply it, you need a slow-motion camera that captures at least 240 fps (many smartphones, such as the iPhone 7 or Samsung Galaxy S8, have a camera with 240 fps slow-motion capabilities). The theoretical frame-to-frame interval of a 240 fps camera is the reciprocal of the framerate: 1/240 s = 4.167 ms.

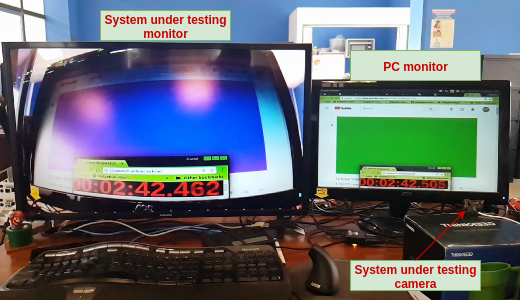

The method consists of running a "ms resolution online timer" and/or a "Color Strobe Light video" on your PC, then pointing the camera of the system under measurement at the PC screen where the timer and/or video is displayed. Display the live video captured by the system under measurement on a different monitor, next to the PC monitor. Then, record a slow-motion video of the two monitors with the smartphone camera.

Finally, analyze the recorded video in VLC using its frame-by-frame forwarding feature. Open the video and pause it once the image is stable (around 6 s from the beginning). Focus on the PC monitor and note the timer value or the color frame of the strobe video. Then step forward frame by frame until the same timer value or color frame appears on the monitor of the system under measurement. The latency is the number of forwarded frames multiplied by the frame interval of the slow-motion camera. For example, if a change on the PC monitor takes 12 frames to appear on the system monitor, and the video was recorded at 240 fps (4.167 ms per frame), the glass to glass latency is 4.167 ms x 12 = 50 ms +/- 4 ms. The measured values have an uncertainty equal to the frame interval of the recorded video, so the higher the framerate of the slow-motion camera, the lower the uncertainty of the measurements.
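The arithmetic of this method can be written as a small helper. This is a minimal sketch; the function names are illustrative, and the uncertainty is taken to be one frame interval of the recording camera, as stated above.

```python
from typing import Tuple


def frame_interval_ms(fps: float) -> float:
    """Time between two consecutive frames of the recording camera, in ms."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


def glass_to_glass_ms(frames_forwarded: int, recording_fps: float) -> Tuple[float, float]:
    """Return (latency_ms, uncertainty_ms) for a frame-stepping measurement."""
    interval = frame_interval_ms(recording_fps)
    return frames_forwarded * interval, interval


if __name__ == "__main__":
    # Example from the text: 12 forwarded frames in a 240 fps recording.
    latency, uncertainty = glass_to_glass_ms(12, 240)
    print(f"{latency:.1f} ms +/- {uncertainty:.1f} ms")
```

Recording at a higher framerate shrinks the second value returned, which is the reason a faster slow-motion camera gives a tighter measurement.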

The below picture illustrates the test setup, for a better understanding of it:

Below you will find a terminal command to generate a high-resolution timer on the console (recommended timer):

while true; do echo -ne "`date +%H:%M:%S:%N`\r"; done

With the console-based high-resolution timer above, you can get a reliable glass to glass latency measurement by taking the difference between the timer value shown on the PC monitor and the one shown on the monitor of the system under test:

Glass to glass latency = PC display timer value - System under testing display timer value
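The subtraction can be sketched as follows for timer values in the `%H:%M:%S:%N` format printed by the command above. The function names and the two example timestamps are hypothetical; the sketch also assumes both readings come from the same recorded frame and are less than 24 hours apart.

```python
NS_PER_SECOND = 1_000_000_000
NS_PER_DAY = 24 * 3600 * NS_PER_SECOND


def timer_to_ns(stamp: str) -> int:
    """Convert an 'HH:MM:SS:NNNNNNNNN' timer value to nanoseconds since midnight."""
    hours, minutes, seconds, nanos = stamp.strip().split(":")
    # Digits that could not be read from the photo are treated as zeros.
    nanos = nanos.ljust(9, "0")
    total_seconds = (int(hours) * 60 + int(minutes)) * 60 + int(seconds)
    return total_seconds * NS_PER_SECOND + int(nanos)


def latency_ms(pc_stamp: str, system_stamp: str) -> float:
    """PC display timer value minus system-under-test display timer value, in ms."""
    diff = timer_to_ns(pc_stamp) - timer_to_ns(system_stamp)
    if diff < 0:
        # The PC timer rolled over midnight between the two readings.
        diff += NS_PER_DAY
    return diff / 1e6


if __name__ == "__main__":
    # Hypothetical readings taken from one frame of the recorded video.
    print(latency_ms("12:00:01:250000000", "12:00:01:175000000"), "ms")
```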

Below you will find useful links to an online ms timer and color strobe videos:

- Millisecond-resolution timer:

- 12 Color Strobe 1Hz refresh rate video

- Color Strobe 33.3 ms refresh rate video

Sub-frame Resolution Method

This method is more complex to apply and requires more time, money, and resources.

If an event happens at a random moment, does it occur across the whole scene at once, or is it restricted to a point in space? If it reaches the whole camera lens at the same time, do we define latency as the time it takes for the event to propagate to the entire receiving screen, or to just a portion of it? The difference between the two might seem small, but it is actually huge.

Consider a 30 fps system, where every frame takes 33 milliseconds. Now consider the camera of that system. To put it simply, every single line of the vertical resolution (say, 720p) is read in sequence, one at a time. The first line is read and sent to the video processor. 16 milliseconds later the middle line (line 360) is read and sent to the processor. 33 milliseconds from the start the same happens to the last line (line 720). What happens when an event occurs on the camera glass at line 360 just after the camera has finished reading that line? It is going to take the camera the time of a full frame (33 milliseconds) to even notice that something changed. That information then has to be sent to the processor and down the line to the display, but even supposing all of that to be latency-free (it is not), in the worst case it takes the time of a full frame, 33 milliseconds, to propagate a random event from a portion of the screen.

That is what happens to analog systems, where the 60 interlaced fields per second are converted to 30 progressive frames and are affected by this latency. There is no such thing as zero latency. It’s just a marketing gimmick, sustained by the difficulty of actually measuring those numbers.

Measuring latency with sub-frame resolution

This means measuring the propagation time of a random event from a small portion of the camera to a small portion of the display. Measuring any other way is wrong, because it only partially takes the framerate into account.

A simple way of doing it is to use an Arduino, light up an LED, and measure the time it takes for some kind of sensor to detect a difference in the display. The sensor needs to be fast, and the most obvious choice for the job (a photoresistor) is too slow, with some manufacturers quoting as much as 100 milliseconds to detect a change in light. For this, we need something more sophisticated: a photodiode, ideally with an included amplifier. A Texas Instruments OPT101P was selected for the job. The diode is pretty fast at detecting light changes. Try putting it below an LED table lamp and you will be able to see the LED switching on and off, something usually measured in microseconds. However, measuring the time between two slightly different light levels on a screen is going to take some tweaking, and you might be forced to increase the feedback resistance of the integrated op-amp to something like 10 MΩ.

However, the end result is worth it: you will have a system capable of measuring FPV latency with millisecond precision.
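The timing in this example can be reproduced with a short sketch. It assumes, as the paragraph above does, that line readout is spread uniformly over the whole frame period; real sensors may read lines faster and idle during blanking. The function names are illustrative.

```python
def line_readout_offset_ms(line: int, total_lines: int, fps: float) -> float:
    """Offset from the start of a frame at which `line` is read (uniform readout assumed)."""
    frame_ms = 1000.0 / fps
    return (line / total_lines) * frame_ms


def wait_for_next_readout_ms(event_offset_ms: float, line: int,
                             total_lines: int, fps: float) -> float:
    """Time from an event on `line` until the sensor next reads that line."""
    frame_ms = 1000.0 / fps
    line_offset = line_readout_offset_ms(line, total_lines, fps)
    # The modulo wraps to the next frame when the line was already read.
    return (line_offset - event_offset_ms) % frame_ms


if __name__ == "__main__":
    middle = line_readout_offset_ms(360, 720, 30)
    print(f"line 360 is read {middle:.1f} ms into the frame")
    # Event on line 360 occurring 0.1 ms after that line was read.
    wait = wait_for_next_readout_ms(middle + 0.1, 360, 720, 30)
    print(f"the event waits {wait:.1f} ms before the camera sees it")
```

An event that just misses its line waits almost a full frame period, which matches the worst case described above.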
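The detection step can be sketched offline, assuming the microcontroller logs timestamped photodiode readings together with the moment the LED was switched on. The code below is written in Python for illustration and is not Arduino firmware. The log format, the threshold of 50 ADC counts, and the sample values are all hypothetical.

```python
from typing import Optional, Sequence, Tuple


def detection_latency_us(samples: Sequence[Tuple[int, int]],
                         led_on_us: int,
                         threshold: int = 50) -> Optional[int]:
    """Return the time from LED switch-on to the first clear rise in sensor level.

    samples: (timestamp_us, adc_value) pairs in time order (assumed log format).
    Returns None if the level never rises by more than `threshold` counts.
    """
    before = [value for t, value in samples if t < led_on_us]
    if not before:
        raise ValueError("need at least one sample before the LED is switched on")
    baseline = sum(before) / len(before)
    for t, value in samples:
        if t >= led_on_us and value - baseline > threshold:
            return t - led_on_us
    return None


if __name__ == "__main__":
    # Hypothetical log: one reading per millisecond, display brightens at 50 ms.
    log = [(t, 100) for t in range(0, 50_000, 1000)]
    log += [(t, 400) for t in range(50_000, 60_000, 1000)]
    latency = detection_latency_us(log, led_on_us=5_000)
    print(f"detected after {latency / 1000:.1f} ms")
```

The resolution of this measurement is bounded by the sampling interval of the log, so a faster sampling loop gives finer sub-frame resolution.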

Article on latency measurement

- "Glass to glass video latency is now under 50 milliseconds" Update from fpv.blue. URL: https://fpv.blue/2016/07/glass-to-glass-video-latency-is-now-below-50-milliseconds/
