MoQ DEMO


1. MoQ Demo (moq-demo)

This page documents the MoQ demo applications (publisher/player), their hexagonal architecture, and how to extend the demo with new tracks and metadata.

1.1 What this demo is

moq-demo is a set of Python applications and libraries that demonstrate:

- Publishing a single MoQ channel that contains multiple video tracks (e.g., multiple robot cameras).

- Subscribing to that channel and dynamically enabling/disabling tracks at runtime.

- Injecting SEI metadata into one encoded video stream and extracting/visualizing it on the receiver side.

- A layered design that keeps the media domain logic independent from the UI and from the specific media runtime.

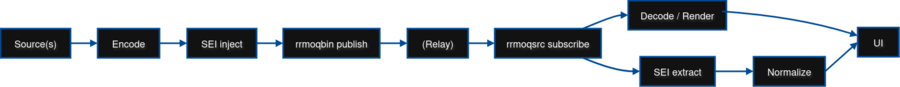

Core end-to-end data flow:

Entrypoints:

- publisher.py – Publisher application

- player.py – Player application (receiver + Qt UI)

2. Demo scenario and requirements

2.1 Robot publisher application

Capabilities:

- Configurable track count (up to 4 tracks in this demo)

- SEI metadata injection for one track

- Synthetic telemetry module (periodic metadata generation)

Publisher requirements (demo readiness):

- Publishes one MoQ channel containing at least 4 video tracks

- One track includes valid SEI metadata that is viewable by the player

- Metadata updates at the configured rate without interruption

- Stable for extended demos (≥ 1 hour continuous run)

2.2 Player application

Capabilities:

- UI for channel selection and track selection

- Multi-track concurrent video display (mosaic)

- Real-time SEI metadata visualization

- Dynamic track subscription (enable/disable tracks live)

Player requirements:

- Allow channel selection

- List tracks for selected channel

- Dynamic enable/disable per track

- Display all active tracks correctly and concurrently

- Parse and display SEI metadata in UI

- No crashes when switching channels/tracks

3. System architecture overview

The implementation follows a Hexagonal Architecture:

- The domain/core defines interfaces for media control, UI control, and metadata generation.

- Concrete implementations (adapters) plug in:

  - A GStreamer backend (pipelines, callbacks, rrmoq elements)

  - A Qt UI server (controls + visualization)

  - A console user server (interactive CLI commands)

This yields:

- testability (core logic can be exercised without a UI),

- flexibility (swap UI or backend),

- clearer separation of responsibilities.

3.1 High-level layering

| Layer | Purpose | Typical modules |

|---|---|---|

| Application (entrypoints) | Wiring, CLI args, user-level behavior | publisher.py, player.py |

| Domain / Ports | Abstract interfaces & orchestration contracts | media_server/*.py, ui_server/ui_server.py, user_server/user_server.py, meta_generator.py |

| Adapters (Implementations) | Backend/UI/CLI concrete implementations | gstreamer_backend.py, gstreamer_server.py, qt_ui_server.py, console_user_server*.py |

| Examples / Snippets | Focused runnable mini-demos | examples/snippets/* |

3.2 Media server internal model (Server / Backend / Entity / MetaGenerator)

The media domain is modeled with four primary concepts:

- MediaServer – orchestrates media entities, callbacks, and metadata generators.

- MediaBackend – executes concrete media operations (e.g., create/play/stop GStreamer pipelines).

- MediaEntity – represents one runnable media unit (e.g., a pipeline instance) plus its identity.

- MetaGenerator – periodic threaded producer of metadata (telemetry, timestamps) that gets injected/exposed through the media pipeline.

Responsibilities in practice:

- Applications request actions through the server (start/stop, attach metadata, attach callbacks).

- Server delegates runtime work to the backend.

- Backend creates/controls pipelines and binds callbacks for SEI injection/extraction.

- MetaGenerator periodically emits dictionaries/payloads; server routes them to the injection path.
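The delegation described above can be sketched in a few lines of Python. This is a minimal illustration, not the demo's actual classes: the method names (`create`, `play`, `stop`, `attach`, `start`) and the `RecordingBackend` test double are assumptions chosen for the example.

```python
from abc import ABC, abstractmethod


class MediaBackend(ABC):
    """Port: executes concrete media operations (hypothetical method names)."""

    @abstractmethod
    def create(self, name: str, description: str) -> None: ...

    @abstractmethod
    def play(self, name: str) -> None: ...

    @abstractmethod
    def stop(self, name: str) -> None: ...


class MediaServer:
    """Orchestrator: keeps track of entities and delegates runtime work."""

    def __init__(self, backend: MediaBackend) -> None:
        self._backend = backend
        self._entities: dict[str, str] = {}

    def attach(self, name: str, description: str) -> None:
        # Remember the entity, then let the backend build it.
        self._entities[name] = description
        self._backend.create(name, description)

    def start(self, name: str) -> None:
        self._require(name)
        self._backend.play(name)

    def stop(self, name: str) -> None:
        self._require(name)
        self._backend.stop(name)

    def _require(self, name: str) -> None:
        if name not in self._entities:
            raise KeyError(f"unknown entity: {name}")


class RecordingBackend(MediaBackend):
    """Test double: records calls instead of touching a media runtime."""

    def __init__(self) -> None:
        self.calls: list[tuple[str, str]] = []

    def create(self, name: str, description: str) -> None:
        self.calls.append(("create", name))

    def play(self, name: str) -> None:
        self.calls.append(("play", name))

    def stop(self, name: str) -> None:
        self.calls.append(("stop", name))
```

Because the server only depends on the abstract backend, the orchestration logic can be exercised with `RecordingBackend` and no GStreamer installation.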

4. Runtime Interaction Model (Control + Data + Metadata Planes)

This section expands the architecture overview with a sequence-style description of participants and step-by-step interactions across control, data, and metadata planes.

4.1 Participants

- User

- Qt UI

- Player Controller (inside player.py orchestration logic)

- GStreamerServer

- GStreamerBackend

- Player Pipeline (rrmoqsrc / decode / compositor / sink)

- Relay

- Publisher Pipeline

These participants cooperate across three logical planes:

- Publish path (upstream to relay)

- Playback/control path (UI → controller → server → backend)

- Data + metadata receive planes (relay → pipeline → UI)

4.2 Publish Path (Publisher → Relay)

Publisher Pipeline → Relay:

1) Capture or generate sources

* Real camera (e.g., v4l2src) or synthetic source (videotestsrc).

* One branch per logical camera/track.

2) Encode (x264) + optional SEI injection

* Each branch encodes independently.

* If metadata is enabled, seiinject embeds structured payload into the bitstream.

3) Push tracks via rrmoqbin

* One sink pad per track.

* Each sink pad is configured with:

  - Channel name

  - Track name (e.g., video1, video2, …)

* rrmoqbin publishes all tracks under a single MoQ channel.

Result: Relay catalog contains one channel with multiple independently subscribable tracks.
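The publish path above can be sketched as a small helper that assembles a pipeline description with one rrmoqbin sink pad per track. The element and property names are taken from the example pipelines later on this page; the function name `build_publisher_pipeline` and its parameters are hypothetical, and SEI injection is left out for brevity.

```python
def build_publisher_pipeline(channel: str, relay: str, patterns: list[str]) -> str:
    """Return a gst-launch style description with one rrmoqbin sink pad per track."""
    if not patterns:
        raise ValueError("at least one source is required")

    # The publishing element, shared by every branch.
    head = [
        f'rrmoqbin relay-mode=local relay-server-host-addr="{relay}" '
        f'name=moq channel="{channel}"'
    ]

    # Pad properties: track i is named video{i+1} and lives in the same channel.
    pads = [
        f'sink_{i}::track-name="video{i + 1}" sink_{i}::channel="{channel}"'
        for i in range(len(patterns))
    ]

    # One encode branch per source, linked to its own sink pad.
    branches = [
        f"videotestsrc is-live=true pattern={pattern} ! videoconvert ! "
        f"x264enc speed-preset=ultrafast tune=zerolatency ! h264parse ! queue ! "
        f"moq.sink_{i}"
        for i, pattern in enumerate(patterns)
    ]
    return " ".join(head + pads + branches)
```

Calling `build_publisher_pipeline("test", "https://127.0.0.1:4443", ["smpte", "ball"])` yields a description with two branches, ending in `moq.sink_0` and `moq.sink_1`.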

4.3 Playback / Control Path (User → Media Runtime)

User → Qt UI:

4) User opens player and interacts with controls

* Select mode (single / mosaic)

* Enable/disable tracks

* Play/Pause

* Resize or reposition video tiles

Qt UI → Player Controller:

5) Qt UI dispatches control commands, including:

* SetVideoTarget(handle, rect)

* PlayPause

* SetMode(single | mosaic)

* SwitchTrack(track)

* SetTileVisible(idx, visible)

The Player Controller translates UI intent into media-server-level operations.

Player Controller → GStreamerServer:

6) Based on current state:

* Build pipeline string appropriate for:

  - Single mode (one track)

  - Mosaic mode (multiple tracks + compositor)

* Attach/start entity (or recreate entity when switching mode/track)

GStreamerServer → GStreamerBackend:

7) Backend executes media lifecycle:

* parse_launch(pipeline_string)

* set_state(PLAYING)

8) Configure runtime bindings:

* Window handle / rendering rectangle

* Metadata extraction callbacks (extract / extract_N per track)

* Video target mapping for compositor tiles

This completes control-plane orchestration.

4.4 Data Plane (Receiving Media)

Relay → Player Pipeline:

9) rrmoqsrc subscribes to selected channel/tracks

* Dynamic subscription depending on mode and selected tracks.

10) Single mode:

* Selected track → decode → sink

11) Mosaic mode:

* Each subscribed track → decode → compositor sink pad

* Compositor → single videosink

Key property:

- Each track is independently delivered and decoded.

- Subscription changes may trigger pipeline reconfiguration or branch re-linking.
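As a rough sketch of how the two modes differ, the helper below builds the per-track receive branches. The element names follow the example pipelines on this page; the function name `build_player_branches` is hypothetical, and the single-mode branch is simplified (no scaling or caps filter).

```python
def build_player_branches(mode: str, tracks: list[str]) -> list[str]:
    """Return one receive branch per subscribed track for the given mode."""
    if mode not in ("single", "mosaic"):
        raise ValueError(f"unsupported mode: {mode}")
    if not tracks:
        raise ValueError("at least one track is required")

    if mode == "single":
        if len(tracks) != 1:
            raise ValueError("single mode takes exactly one track")
        # Single mode: selected track -> decode -> sink.
        return [
            f"src.{tracks[0]} ! queue ! "
            "seiextract signal-new-metadata=true name=extract ! "
            "decodebin ! videoconvert ! ximagesink name=sink sync=false"
        ]

    # Mosaic mode: each track -> decode -> its own compositor sink pad.
    return [
        f"src.{track} ! queue ! "
        f"seiextract signal-new-metadata=true name=extract_{i} ! "
        f"decodebin ! videoconvert ! queue ! comp.sink_{i}"
        for i, track in enumerate(tracks)
    ]
```

Each branch carries its own `seiextract` instance, which is what lets the metadata callbacks identify the originating track.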

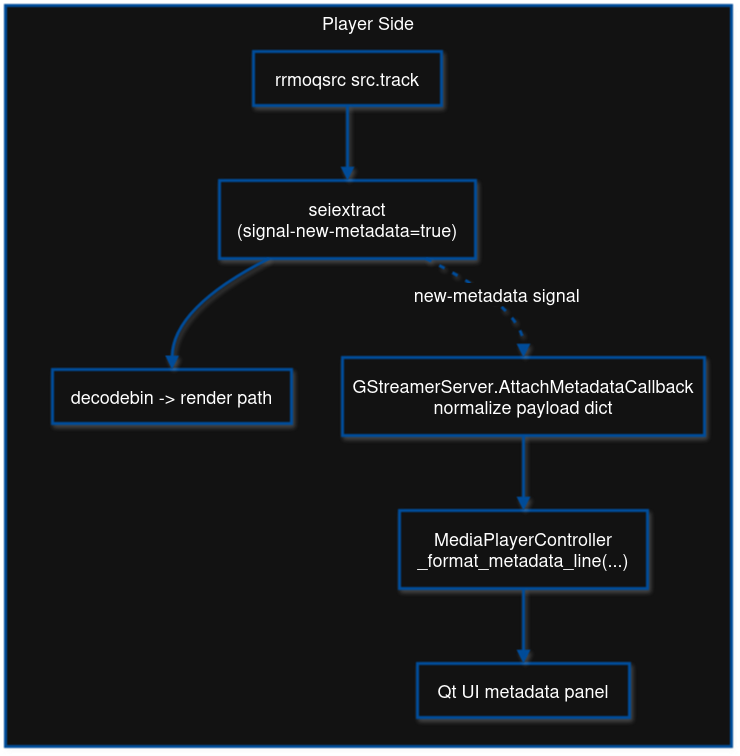

4.5 Metadata Plane (SEI Extraction Flow)

Player Pipeline (seiextract) → GStreamerServer callback:

12) seiextract emits metadata messages

13) Backend callback captures:

* element name

* raw bytes

* track index

* decoded payload

14) GStreamerServer normalizes metadata:

* Convert bytes to structured dict/list

* Tag with track index

GStreamerServer → Player Controller → Qt UI:

15) Controller formats metadata text

* Uses _format_metadata_line() in player.py

* May customize per-field formatting

16) Qt UI updates metadata label/panel in real time

Metadata plane is independent of video rendering and does not block playback.
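The normalization step can be illustrated as follows. This sketch assumes the SEI payload is UTF-8 encoded JSON, which this page does not confirm, and it derives the track index from the `extract` / `extract_N` element naming shown in the example pipelines. The function names are hypothetical.

```python
import json
import re

# Matches "extract" (single mode) and "extract_N" (mosaic mode).
_EXTRACT_NAME = re.compile(r"^extract(?:_(\d+))?$")


def track_index_from_element(name: str) -> int:
    """Map an extractor element name to its track index (plain 'extract' is 0)."""
    match = _EXTRACT_NAME.match(name)
    if match is None:
        raise ValueError(f"not an extractor element: {name}")
    return int(match.group(1)) if match.group(1) is not None else 0


def normalize_metadata(element_name: str, raw: bytes) -> dict:
    """Decode raw payload bytes (assumed UTF-8 JSON) and tag the track index."""
    try:
        payload = json.loads(raw.decode("utf-8"))
    except (UnicodeDecodeError, json.JSONDecodeError):
        # Keep undecodable payloads visible instead of dropping them.
        payload = {"raw_hex": raw.hex()}
    return {"track": track_index_from_element(element_name), "payload": payload}
```

Falling back to a hex dump keeps a malformed payload from raising inside the callback, so it cannot interrupt playback.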

4.6 Shutdown Sequence

User/Window close → Qt UI → Player Controller:

17) Stop request issued:

* Disable UI controls

* Request entity teardown

Player Controller → GStreamerServer / GStreamerBackend:

18) Backend performs cleanup:

* Detach callbacks

* Set pipeline to NULL

* Remove media entity

* Release window handles

Application exits cleanly without leaving GStreamer resources active.

This interaction model clearly separates:

- Control plane – UI and orchestration logic.

- Data plane – Media streaming via rrmoqsrc/rrmoqbin.

- Metadata plane – SEI injection/extraction and UI updates.

The separation ensures stability, extensibility, and predictable behavior when:

- Switching modes,

- Adding/removing tracks,

- Extending metadata,

- Or running long-duration demos.

5. Component interactions

This section explains how the most important components cooperate at runtime.

5.1 MoQ publish path (publisher)

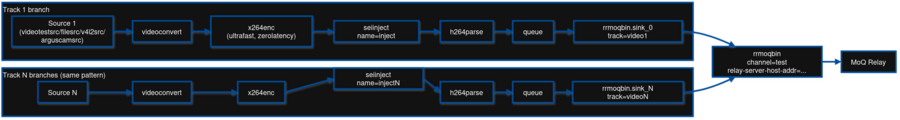

In the publisher pipeline, each camera/source becomes a branch:

- Source element (real camera or test source)

- Encoder (x264enc in the demo)

- Optional SEI metadata injection (seiinject)

- MoQ publish element (rrmoqbin), using a dedicated sink pad per track

Conceptually, this is how the publisher pipeline works:

Key idea: one rrmoqbin sink pad per track. Track naming is configured via sink pad properties (e.g., video1, video2…).

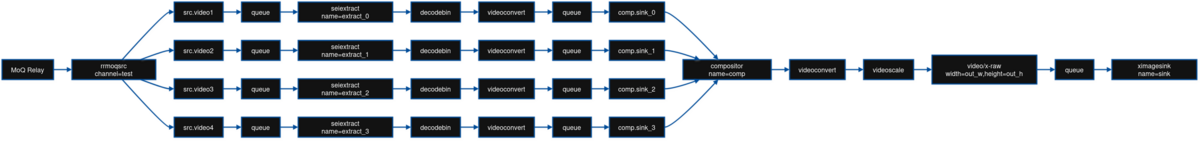

5.2 MoQ subscribe path (player)

On the player side, rrmoqsrc subscribes to the channel and requested tracks. Each subscribed track is decoded and rendered. In mosaic mode, multiple decoded video branches are composed into a single output (compositor).

Conceptually this is the player pipeline (single mode):

And this is the player pipeline (mosaic mode):

Dynamic subscription means the player can add/remove track branches at runtime (enable/disable tiles) without tearing down the entire application flow.
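One way to reason about dynamic subscription is as a diff between the currently active tracks and the tracks the user wants. The helper below is a hypothetical sketch of that bookkeeping only; how the demo actually adds or removes branches is not shown here.

```python
def plan_subscription_change(
    active: list[str], desired: list[str]
) -> tuple[list[str], list[str]]:
    """Return (to_add, to_remove) so that `active` becomes `desired`."""
    active_set, desired_set = set(active), set(desired)
    # Preserve the caller's ordering so tile positions stay predictable.
    to_add = [track for track in desired if track not in active_set]
    to_remove = [track for track in active if track not in desired_set]
    return to_add, to_remove
```

Tracks present in both lists are left untouched, so their branches keep running while the others change.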

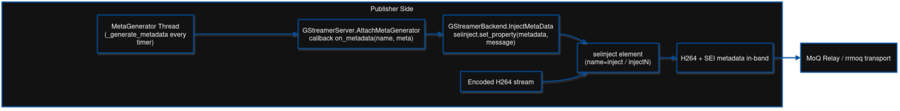

5.3 SEI metadata path (inject + extract + UI)

The demo uses GstSEIMetadata embedded in the encoded video bitstream.

Injection:

- A metadata dictionary is produced periodically (telemetry generators).

- The payload is assigned to the SEI injection element (seiinject). In this demo, it is attached to only one track.

- The injection element writes SEI messages into the outgoing encoded frames.

Extraction:

- On the receiver side, seiextract reads SEI messages from the incoming stream.

- Extracted metadata is normalized into a Python dict/list structure.

- The UI updates a metadata panel live.

On the publisher side, the flow looks like this:

And on the player side, the flow looks like this:

6. Repository layout and file guide

6.1 Top-level

| Path | Role |

|---|---|

| .pre-commit-config.yaml | Formatting/linting hooks (pylint, isort, autopep8, hygiene hooks) |

| README.md | Setup instructions (uv, dependencies) (note: template; this page complements it) |

| publisher.py | Publisher application entrypoint: builds multi-track publish pipelines and attaches metadata generators |

| player.py | Player application entrypoint: builds subscriber pipelines, coordinates UI, supports single/mosaic and metadata display |

6.2 Media server

6.2.1 Domain/Ports

| File | Purpose (Port / Core) |

|---|---|

| media_server/media_server.py | Abstract server lifecycle and orchestration API (attach/start/stop entities, attach callbacks, attach meta generators) |

| media_server/media_backend.py | Abstract backend interface for pipeline operations (create/play/stop/delete, callback wiring) |

| media_server/media_entity.py | Abstract media entity contract (name, pipeline identity, start/stop) |

| media_server/meta_generator.py | Base threaded periodic metadata producer; used by time/telemetry generators |

| media_server/main.py | Standalone harness to test media server + metadata generator behavior |

6.2.2 GStreamer adapters

| File | Purpose (Adapter / Implementation) |

|---|---|

| media_server/media_backends/gstreamer_backend/gstreamer_backend.py | Concrete backend: creates and manages GStreamer pipelines, binds injection/extraction callbacks, sends actions into pipelines |

| media_server/media_entities/gstreamer_entities/gstreamer_entity.py | Concrete entity: wraps a GStreamer pipeline instance as a start/stop capable MediaEntity |

| media_server/media_servers/gstreamer_server/gstreamer_server.py | Concrete server/orchestrator: builds publisher/player pipelines, catalog fetching, mode switching (single/mosaic), tile visibility, play/pause toggles |

6.2.3 Metadata generators

| File | Purpose |

|---|---|

| media_server/meta_generators/time_meta_generator.py | Generates timestamp-only metadata at a configured rate |

| media_server/meta_generators/telemetry_meta_generator.py | Generates mock telemetry payloads (dict) at a configured rate (extend this for new fields) |

6.3 UI server (port + Qt adapter)

| File | Purpose |

|---|---|

| ui_server/ui_server.py | Abstract UI server interface (port) used by the player/controller |

| ui_server/ui_servers/qt_ui_server/qt_ui_server.py | Qt implementation: window, controls, track list, metadata panel, dispatch bridge to controller |

| ui_server/tests/* | Qt tests: offscreen setup + unit/UI/click tests to validate UI behavior |

Test notes:

- ui_server/tests/conftest.py sets up offscreen Qt to enable headless test runs.

- test_ui_server_unit.py validates constructor/dispatch behavior.

- test_ui_server_ui.py and test_ui_server_ui_clicks.py validate UI interactions.

6.4 User server (CLI port + console adapters)

| File | Purpose |

|---|---|

| user_server/user_server.py | Abstract user-server interface (port) for runtime property changes |

| user_server/user_servers/console_text.py | Help text/constants for console UX |

| user_server/user_servers/console_user_server.py | Manual parser console (set/pattern/quit) |

| user_server/user_servers/console_user_server_click.py | Click-based improved console parser |

6.5 Examples/snippets

| Path | Purpose |

|---|---|

| examples/snippets/multiple-tracks-single-channel/* | CLI sender/receiver demos for multiple tracks in a single channel (often assumes 4 tracks) |

| examples/snippets/interface_receiver/* | Sender + Qt receiver prototype (UI receiver demo) |

| examples/snippets/sei/* | Minimal SEI metadata inject/extract demos (useful for validating metadata path) |

7. How to build and run

8. Requirements

- Python >= 3.10

- uv for environment management

- GStreamer runtime and plugins

- PyGObject bindings for the GStreamer examples

9. Python environment setup (uv)

Create environment and install base deps:

uv venv

uv sync

Install optional extras:

- UI server:

uv sync --extra ui

- Examples (PyGObject + PyQt):

uv sync --extra examples

- Everything:

uv sync --all-extras

10. System dependencies (GStreamer + PyGObject)

10.1 Ubuntu/Debian

sudo apt-get update

sudo apt-get install -y \
  python3-gi \
  gstreamer1.0-tools \
  gstreamer1.0-plugins-base \
  gstreamer1.0-plugins-good \
  gstreamer1.0-plugins-bad \
  gstreamer1.0-plugins-ugly \
  gir1.2-gst-plugins-base-1.0

10.2 Fedora

sudo dnf install -y \
  python3-gobject \
  gstreamer1 \
  gstreamer1-plugins-base \
  gstreamer1-plugins-good \
  gstreamer1-plugins-bad-free \
  gstreamer1-plugins-ugly-free

10.3 macOS (Homebrew)

brew install \
  gstreamer \
  gst-plugins-base \
  gst-plugins-good \
  gst-plugins-bad \
  gst-plugins-ugly \
  pygobject3

11. Running the main apps

11.1 UI server demo (standalone Qt)

uv sync --extra ui

uv run python ui-server/test_ui_server.py

11.2 Publisher (publisher)

python3 publisher.py

11.3 Player (receiver + UI)

python3 player.py

12. Running snippets

12.1 Interface receiver (Qt + GStreamer)

uv sync --extra examples

uv run python examples/snippets/interface_receiver/interfqt.py --help

12.2 Single-channel sender (GStreamer)

uv sync --extra examples

uv run python examples/snippets/interface_receiver/single_channel_sender.py --help

12.3 Multiple tracks receiver

uv sync --extra examples

uv run python examples/snippets/multiple-tracks-single-channel/single_channel_receiver.py --help

13. Example pipelines

These examples mirror the pipelines constructed by publisher.py, player.py, and the GStreamer server builder.

13.1 Multi-track publisher

Publisher (4 videotestsrc -> 4 tracks):

gst-launch-1.0 -e \
rrmoqbin relay-mode=local relay-server-host-addr="https://127.0.0.1:4443" name=moq channel="test" \
sink_0::track-name="video1" sink_0::channel="test" \
sink_1::track-name="video2" sink_1::channel="test" \
sink_2::track-name="video3" sink_2::channel="test" \
sink_3::track-name="video4" sink_3::channel="test" \
videotestsrc is-live=true pattern=smpte name=src1 ! videoconvert ! x264enc speed-preset=ultrafast tune=zerolatency ! seiinject name=inject ! h264parse ! queue ! moq.sink_0 \
videotestsrc is-live=true pattern=ball name=src2 ! videoconvert ! x264enc speed-preset=ultrafast tune=zerolatency ! seiinject name=inject2 ! h264parse ! queue ! moq.sink_1 \
videotestsrc is-live=true pattern=snow name=src3 ! videoconvert ! x264enc speed-preset=ultrafast tune=zerolatency ! seiinject name=inject3 ! h264parse ! queue ! moq.sink_2 \
videotestsrc is-live=true pattern=black name=src4 ! videoconvert ! x264enc speed-preset=ultrafast tune=zerolatency ! seiinject name=inject4 ! h264parse ! queue ! moq.sink_3

13.2 Player on single mode (video 1)

gst-launch-1.0 -e \
rrmoqsrc relay-url="https://127.0.0.1:4443" channel="test" name=src \
src.video1 ! queue ! seiextract signal-new-metadata=true name=extract ! decodebin ! videoconvert ! videoscale ! video/x-raw,width=1000,height=600,pixel-aspect-ratio=1/1 ! queue ! ximagesink name=sink sync=false force-aspect-ratio=false

13.3 Player on mosaic mode

gst-launch-1.0 -e \
rrmoqsrc relay-url="https://127.0.0.1:4443" channel="test" name=src \
compositor name=comp background=black ignore-inactive-pads=true \
sink_0::xpos=0 sink_0::ypos=0 sink_0::width=500 sink_0::height=300 sink_0::sizing-policy=keep-aspect-ratio \
sink_1::xpos=500 sink_1::ypos=0 sink_1::width=500 sink_1::height=300 sink_1::sizing-policy=keep-aspect-ratio \
sink_2::xpos=0 sink_2::ypos=300 sink_2::width=500 sink_2::height=300 sink_2::sizing-policy=keep-aspect-ratio \
sink_3::xpos=500 sink_3::ypos=300 sink_3::width=500 sink_3::height=300 sink_3::sizing-policy=keep-aspect-ratio \
! videoconvert ! videoscale ! video/x-raw,width=1000,height=600,pixel-aspect-ratio=1/1 ! queue ! ximagesink name=sink sync=false force-aspect-ratio=false \
src.video1 ! queue ! seiextract signal-new-metadata=true name=extract_0 ! decodebin ! videoconvert ! queue ! comp.sink_0 \
src.video2 ! queue ! seiextract signal-new-metadata=true name=extract_1 ! decodebin ! videoconvert ! queue ! comp.sink_1 \
src.video3 ! queue ! seiextract signal-new-metadata=true name=extract_2 ! decodebin ! videoconvert ! queue ! comp.sink_2 \
src.video4 ! queue ! seiextract signal-new-metadata=true name=extract_3 ! decodebin ! videoconvert ! queue ! comp.sink_3
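The compositor pad geometry in the mosaic pipeline follows a simple grid. The sketch below computes the same `sink_N::` properties for an arbitrary tile count; the function name `mosaic_pad_properties` and the near-square grid rule are assumptions made for illustration, not necessarily how the GStreamer server builder lays out tiles.

```python
import math


def mosaic_pad_properties(count: int, width: int = 1000, height: int = 600) -> list[str]:
    """Return compositor sink pad properties for `count` tiles in a grid."""
    if count < 1:
        raise ValueError("count must be at least 1")

    # Near-square grid: 4 tiles -> 2x2, 5 or 6 tiles -> 3x2.
    cols = math.ceil(math.sqrt(count))
    rows = math.ceil(count / cols)
    tile_w, tile_h = width // cols, height // rows

    props = []
    for i in range(count):
        col, row = i % cols, i // cols  # fill rows left to right
        props.append(
            f"sink_{i}::xpos={col * tile_w} sink_{i}::ypos={row * tile_h} "
            f"sink_{i}::width={tile_w} sink_{i}::height={tile_h} "
            f"sink_{i}::sizing-policy=keep-aspect-ratio"
        )
    return props
```

With `count=4` and the default 1000x600 canvas, this reproduces the four 500x300 tiles used in the pipeline above.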

14. Extending the demo

15. Adding new tracks

15.1 Publisher side

Edit publisher.py

1) Increase the max source/track limit in main() (currently 1–4 in the demo configuration).

2) Add a new default slot in the defaults structure (so the UI/CLI has a stable mapping).

3) Ensure the rrmoq sink pad is configured with a unique track name:

sink_{i}::track-name=video{i+1}

4) Ensure the branch is linked into the correct rrmoqbin sink pad:

... ! moq.sink_{i}

Result: new tracks appear as video5, video6, etc.

15.2 Player side

If using explicit tracks:

--tracks video1,video2,video3,video4,video5

If tracks are not provided, the player discovers tracks automatically via catalog fetching (FetchCatalogTracks()).
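A minimal sketch of this "explicit list, else discover" behavior with argparse is shown below. The `--tracks` flag comes from the example above; the helper names and the `discover` callable, which stands in for the catalog fetch, are hypothetical.

```python
import argparse
from typing import Callable, Sequence


def parse_tracks(value: str) -> list[str]:
    """Parse a comma-separated track list such as 'video1,video2'."""
    tracks = [item.strip() for item in value.split(",") if item.strip()]
    if not tracks:
        raise argparse.ArgumentTypeError("expected at least one track name")
    if len(set(tracks)) != len(tracks):
        raise argparse.ArgumentTypeError("duplicate track names")
    return tracks


def resolve_tracks(argv: Sequence[str], discover: Callable[[], list[str]]) -> list[str]:
    """Use --tracks when given; otherwise fall back to discovery."""
    parser = argparse.ArgumentParser()
    parser.add_argument("--tracks", type=parse_tracks, default=None)
    args = parser.parse_args(argv)
    # `discover` is only called when the user did not pin the track list.
    return args.tracks if args.tracks is not None else discover()
```

For example, `resolve_tracks(["--tracks", "video1,video5"], discover)` returns the explicit list without calling `discover`.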

15.3 UI and snippet compatibility

- The Qt UI already adapts to the track count dynamically.

- Some snippet apps assume exactly 4 tracks and must be updated if you exceed 4:

  - examples/snippets/interface_receiver/interfqt.py

  - examples/snippets/multiple-tracks-single-channel/single_channel_sender.py

  - examples/snippets/multiple-tracks-single-channel/single_channel_receiver.py

16. Adding new metadata fields

16.1 Step 1 – Extend telemetry generator output

Edit:

- media_server/meta_generators/telemetry_meta_generator.py

Add keys in the generated dict payload, for example:

{

"battery": 87,

"gps": {"lat": 10.1, "lon": -84.2},

"state": "OK"

}
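To show where such keys could be produced, here is a self-contained sketch of a periodic threaded generator that emits this payload shape. `PeriodicGenerator` and `TelemetryGenerator` are hypothetical stand-ins, not the classes in media_server/; the real base class may expose a different interface.

```python
import threading
import time
from typing import Callable


class PeriodicGenerator:
    """Hypothetical base: calls generate() every `period_s` seconds on a thread."""

    def __init__(self, period_s: float, on_payload: Callable[[dict], None]) -> None:
        self._period_s = period_s
        self._on_payload = on_payload
        self._stop = threading.Event()
        self._thread = threading.Thread(target=self._run, daemon=True)

    def generate(self) -> dict:
        raise NotImplementedError

    def _run(self) -> None:
        # Event.wait doubles as an interruptible sleep: it returns True once
        # stop() is called, which ends the loop promptly.
        while not self._stop.wait(self._period_s):
            self._on_payload(self.generate())

    def start(self) -> None:
        self._thread.start()

    def stop(self) -> None:
        self._stop.set()
        self._thread.join()


class TelemetryGenerator(PeriodicGenerator):
    """Mock telemetry with the new battery, gps, and state fields."""

    def __init__(self, period_s: float, on_payload: Callable[[dict], None]) -> None:
        super().__init__(period_s, on_payload)
        self._battery = 100

    def generate(self) -> dict:
        self._battery = max(0, self._battery - 1)  # simulated drain
        return {
            "timestamp": time.time(),
            "battery": self._battery,
            "gps": {"lat": 10.1, "lon": -84.2},
            "state": "OK" if self._battery > 20 else "LOW",
        }
```

New fields only need to be added in `generate()`; the delivery callback receives the whole dict unchanged.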

16.2 Step 2 – Ensure injection path is attached

publisher.py attaches the generator with:

server.AttachMetaGenerator(entity, mg, "inject")

The backend maps the generator payload into the SEI injection element’s metadata property.

16.3 Step 3 – Update receiver formatting (optional)

The player already pretty-prints dict/list payloads in:

- player.py → _format_metadata_line()

If you want custom formatting per field (e.g., battery gauge, GPS formatting), update that function and/or the Qt UI renderer in:

- ui_server/ui_servers/qt_ui_server/qt_ui_server.py
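As an illustration of per-field formatting, the sketch below renders a battery gauge and fixed-precision GPS coordinates and falls back to `key=value` for everything else. It is a hypothetical example, not the body of `_format_metadata_line()` in player.py, and its signature is an assumption.

```python
def format_metadata_line(track: int, payload: dict) -> str:
    """Render one metadata payload as a single display line."""
    parts = []
    for key, value in payload.items():
        if key == "battery" and isinstance(value, (int, float)):
            # Ten-segment text gauge, clamped to the 0..10 range.
            filled = max(0, min(10, round(value / 10)))
            gauge = "#" * filled + "." * (10 - filled)
            parts.append(f"battery=[{gauge}] {value}%")
        elif key == "gps" and isinstance(value, dict):
            lat = value.get("lat", 0.0)
            lon = value.get("lon", 0.0)
            parts.append(f"gps={lat:.4f},{lon:.4f}")
        else:
            # Unknown fields still show up, just without special formatting.
            parts.append(f"{key}={value}")
    return f"[track {track}] " + " | ".join(parts)
```

Keeping a generic fallback branch means newly added telemetry fields appear in the panel before any custom formatting is written for them.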

17. Quick validation checklist

After adding tracks or metadata fields:

- Start relay + publisher with your new configuration.

- Run player in mosaic mode.

- Confirm the new track appears and can be enabled/disabled.

- Confirm the metadata panel updates and includes the new fields.

- Switch channels/tracks repeatedly to validate stability (no crashes).

- Run for ≥ 1 hour to validate continuous demo stability.