System Architecture

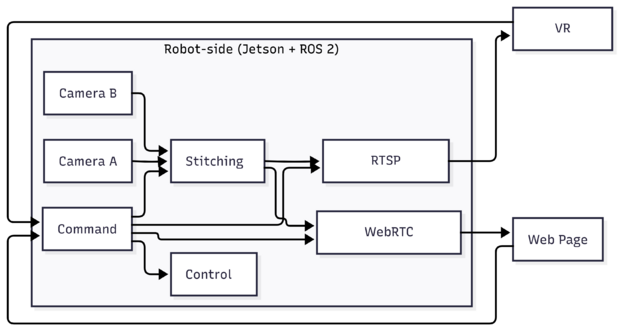

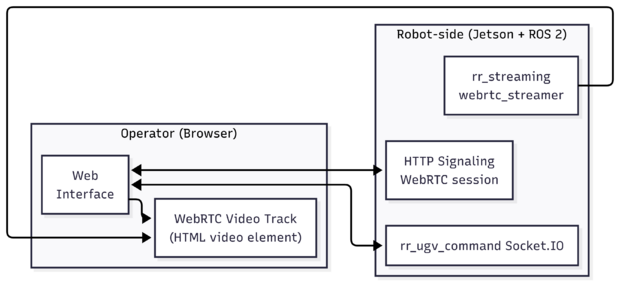

RidgeRun Immersive Teleoperation is implemented as a distributed system composed of a robot-side runtime and operator-facing web and VR control interfaces, connected through dedicated video and control paths. On the robot, ROS 2 is used as the internal communication layer to acquire camera data, stream video, and execute control commands.

Camera frames are published by ROS 2 camera nodes and consumed by a streaming service, which delivers real-time video to the operator: WebRTC for browser clients and RTSP for the VR headset. This video path is optimized for low latency and supports multiple camera sources.

Operator inputs are handled independently through a command server that enforces control authority and monitors connection health using heartbeat mechanisms. Approved commands are translated into ROS 2 messages and forwarded to the robot driver, which interfaces directly with the robot hardware.
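The control-authority and heartbeat behavior described above can be illustrated with a minimal sketch. It is not the command server's actual implementation: the class name `CommandGate`, the one-second timeout, and the single-owner policy are assumptions made for illustration.

```python
import time
from typing import Callable, Optional


class CommandGate:
    """Hypothetical gate: one operator holds control; stale heartbeats release it."""

    def __init__(self, timeout_s: float = 1.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.timeout_s = timeout_s          # assumed value, not from the product
        self._clock = clock                 # injectable so the logic can be tested
        self._owner: Optional[str] = None
        self._last_beat = 0.0

    def _expire_if_stale(self) -> None:
        # Release control when the owner has been silent longer than the timeout.
        if self._owner is not None and self._clock() - self._last_beat > self.timeout_s:
            self._owner = None

    def request_control(self, operator_id: str) -> bool:
        """Grant control if nobody holds it or the caller already does."""
        self._expire_if_stale()
        if self._owner in (None, operator_id):
            self._owner = operator_id
            self._last_beat = self._clock()
            return True
        return False

    def heartbeat(self, operator_id: str) -> None:
        """Refresh the timer; heartbeats from non-owners are ignored."""
        self._expire_if_stale()
        if self._owner == operator_id:
            self._last_beat = self._clock()

    def approve(self, operator_id: str) -> bool:
        """Return True only for commands from the current, live owner."""
        self._expire_if_stale()
        return self._owner == operator_id
```

In this sketch a command is forwarded only when `approve()` returns `True`, so a dropped connection stops commands from reaching the robot once the timeout elapses.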

The following diagram provides a high-level overview of the system architecture and the interaction between its main components.

Robot

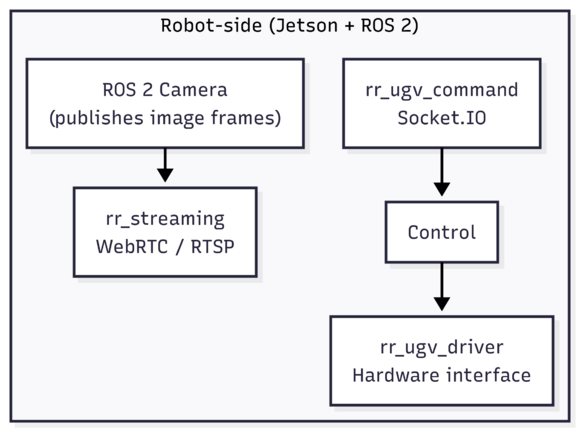

The robot-side runtime runs on an NVIDIA Jetson platform and uses ROS 2 to coordinate camera capture, video streaming, and hardware control. A ROS 2 camera node publishes live image frames, which are consumed by rr_streaming to generate the video feed delivered to the operator.

In parallel, rr_ugv_command receives operator control requests, enforces control authority and connection health through heartbeat mechanisms, and publishes the corresponding ROS 2 command messages. These commands are consumed by rr_ugv_driver, which bridges ROS 2 control messages to the robot hardware through its hardware interface, enabling actions such as robot motion and camera pan/tilt control.
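The bridging role of the driver can be sketched as a dispatcher that maps each command type to a call on a hardware interface. Everything named here (`MotionCommand`, `PanTiltCommand`, `HardwareInterface`, `dispatch`, and the units) is hypothetical and does not reflect the real rr_ugv_driver message types or API.

```python
from dataclasses import dataclass
from typing import List, Protocol, Tuple, Union


@dataclass
class MotionCommand:
    linear: float    # forward speed (assumed m/s)
    angular: float   # turn rate (assumed rad/s)


@dataclass
class PanTiltCommand:
    pan_deg: float
    tilt_deg: float


class HardwareInterface(Protocol):
    """Hypothetical boundary between ROS 2 messages and the robot hardware."""

    def set_velocity(self, linear: float, angular: float) -> None: ...
    def set_pan_tilt(self, pan_deg: float, tilt_deg: float) -> None: ...


class RecordingHardware:
    """Stand-in hardware that records calls instead of moving anything."""

    def __init__(self) -> None:
        self.calls: List[Tuple[str, float, float]] = []

    def set_velocity(self, linear: float, angular: float) -> None:
        self.calls.append(("velocity", linear, angular))

    def set_pan_tilt(self, pan_deg: float, tilt_deg: float) -> None:
        self.calls.append(("pan_tilt", pan_deg, tilt_deg))


def dispatch(cmd: Union[MotionCommand, PanTiltCommand], hw: HardwareInterface) -> None:
    """Forward one command to the matching hardware call."""
    if isinstance(cmd, MotionCommand):
        hw.set_velocity(cmd.linear, cmd.angular)
    elif isinstance(cmd, PanTiltCommand):
        hw.set_pan_tilt(cmd.pan_deg, cmd.tilt_deg)
    else:
        raise TypeError(f"unsupported command: {cmd!r}")
```

Keeping the hardware behind a small interface like this lets the command-handling logic be exercised without a physical robot.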

360 Video

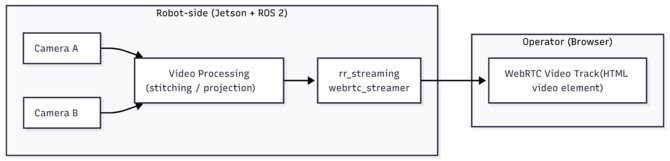

For immersive VR teleoperation, the system supports a 360-degree video pipeline that combines video from two onboard cameras into a single panoramic view suitable for VR rendering. The camera streams are captured on the robot and published as ROS 2 image topics, which are consumed by a dedicated video processing component that merges them into a single 360-degree video stream.

The video processing stage also supports selecting the active video source to be streamed. Operators can switch between a front camera view, a back camera view, or the generated 360-degree panoramic view. The selected output is published as a ROS 2 image topic and provided as input to the streaming service.
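The source-selection step can be pictured as a small router that forwards only the active view. This is a sketch under assumptions: the names `VideoSource` and `SourceSelector`, the string identifiers, and the default view are illustrative, and frames are represented as plain bytes rather than ROS 2 image messages.

```python
from enum import Enum
from typing import Dict, Optional


class VideoSource(Enum):
    FRONT = "front"
    BACK = "back"
    PANORAMA = "360"


class SourceSelector:
    """Hypothetical selector that forwards frames from the active view only."""

    def __init__(self, initial: VideoSource = VideoSource.FRONT) -> None:
        self.active = initial

    def select(self, name: str) -> VideoSource:
        # VideoSource(name) raises ValueError for unknown names,
        # which leaves the previously active view unchanged.
        self.active = VideoSource(name)
        return self.active

    def route(self, frames: Dict[VideoSource, bytes]) -> Optional[bytes]:
        """Return the latest frame of the active view, or None if it is missing."""
        return frames.get(self.active)
```

Because the selector emits a single output regardless of which view is active, the downstream streaming stage does not need to know how many cameras exist.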

From this point onward, the selected video stream follows the same low-latency streaming path as standard camera feeds and is delivered to the VR application running on the Meta Quest 2 headset, without requiring changes to the underlying streaming infrastructure.

Video Streaming

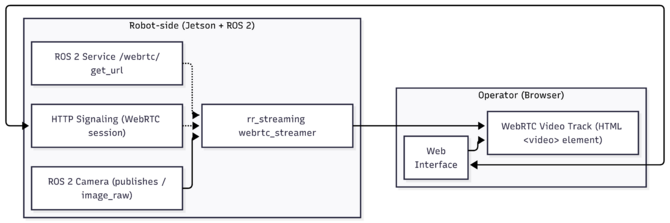

Video streaming on the robot is provided by the rr_streaming package. In the robot-side runtime, ROS 2 camera nodes publish image frames as sensor_msgs/Image, which are consumed by the streaming component to generate real-time video streams for remote operators.
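For readers unfamiliar with `sensor_msgs/Image`, the sketch below mirrors its main layout fields (`height`, `width`, `encoding`, `step`, `data`) in a plain dataclass and checks that a frame is internally consistent before it would be handed to an encoder. The `header` and `is_bigendian` fields are omitted for brevity, and the helper `is_consistent` and the encoding table are illustrative, not part of rr_streaming.

```python
from dataclasses import dataclass

# Bytes per pixel for a few common 8-bit encodings.
BYTES_PER_PIXEL = {"mono8": 1, "rgb8": 3, "bgr8": 3, "rgba8": 4, "bgra8": 4}


@dataclass
class ImageMsg:
    """Plain-Python stand-in for the layout fields of sensor_msgs/Image."""
    height: int      # number of rows
    width: int       # number of columns
    encoding: str    # pixel format, e.g. "rgb8"
    step: int        # full row length in bytes (may include padding)
    data: bytes      # pixel buffer of size step * height


def is_consistent(msg: ImageMsg) -> bool:
    """Check that the buffer size matches the declared geometry."""
    bpp = BYTES_PER_PIXEL.get(msg.encoding)
    if bpp is None:
        return False  # encoding not covered by this sketch
    return msg.step >= msg.width * bpp and len(msg.data) == msg.step * msg.height
```

For example, a 640x480 `rgb8` frame without row padding has `step = 1920` and a 921,600-byte buffer.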

The streaming component supports multiple streaming protocols depending on the client type. For browser-based operators, the WebRTC streamer (webrtc_streamer) generates a low-latency WebRTC video stream that can be consumed directly by a standard web browser. This stream is used by the Web Interface to display live video from the robot without requiring custom plugins or client-side video processing.

For immersive VR operation, the same video source is also exposed through an RTSP stream, allowing RTSP-compatible clients, such as the VR application running on the Meta Quest 2 headset, to receive the robot video feed. This separation allows each client to use the streaming protocol best suited to its requirements while sharing the same robot-side video pipeline.

Web Interface

The Web Interface is a browser-based application that allows an operator to remotely view live video and control the robot. It provides controls for robot movement, camera gimbal actuation, and camera selection, enabling the operator to switch between available video views while monitoring the robot in real time.

Live video is received through a WebRTC connection established with the streaming service and rendered directly in the browser using a standard HTML video element. This allows low-latency video display without requiring custom plugins or client-side video processing.

Control commands generated by user interactions in the Web Interface are sent separately to the command server using Socket.IO. The command server enforces control authority and monitors connection health before forwarding approved commands to the robot-side runtime.
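A control message sent over Socket.IO is an event name plus a JSON-serializable payload. The sketch below builds such a pair for the three kinds of control the Web Interface offers. The event name `"command"`, the `kind` and `seq` fields, and the helper `build_command` are assumptions for illustration; the actual message schema is not documented here.

```python
import json
from typing import Any, Dict, Tuple

# Hypothetical command kinds, one per control group offered by the Web Interface.
ALLOWED_KINDS = {"move", "gimbal", "select_camera"}


def build_command(kind: str, seq: int, **fields: Any) -> Tuple[str, Dict[str, Any]]:
    """Return an (event, payload) pair suitable for a Socket.IO emit call."""
    if kind not in ALLOWED_KINDS:
        raise ValueError(f"unknown command kind: {kind}")
    payload = {"kind": kind, "seq": seq, **fields}
    json.dumps(payload)  # raises TypeError early if a field is not JSON-serializable
    return "command", payload
```

A client would then pass the pair to its Socket.IO library's emit call, for example `socket.emit(event, payload)` in the browser client. The sequence number is one possible way to let the server discard stale or reordered commands.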

VR Headset Application

The VR Interface runs on a Meta Quest 2 headset and provides an immersive teleoperation experience based on a 360-degree video stream. The headset consumes the panoramic video delivered by the robot through an RTSP stream, allowing the operator to observe the robot’s surroundings by naturally looking around, without requiring camera gimbal control.

Robot movement commands are generated using the Meta Quest controller joystick and transmitted to the command server through the same control path used by other operator interfaces. Control authority and connection health are enforced by the command server before approved commands are forwarded to the robot-side runtime.
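One common way to turn joystick axes into motion commands is to apply a deadzone and then scale to speed limits. The sketch below shows that approach; the deadzone, the speed limits, the axis sign convention, and the function names are all assumptions and are not taken from the VR application.

```python
def apply_deadzone(value: float, deadzone: float = 0.1) -> float:
    """Zero out small stick noise and rescale the rest to the full -1..1 range."""
    value = max(-1.0, min(1.0, value))   # clamp raw axis input
    if abs(value) < deadzone:
        return 0.0
    sign = 1.0 if value > 0 else -1.0
    return sign * (abs(value) - deadzone) / (1.0 - deadzone)


def joystick_to_motion(x: float, y: float,
                       max_linear: float = 0.5,    # assumed limit, m/s
                       max_angular: float = 1.0):  # assumed limit, rad/s
    """Map stick axes to (linear, angular) velocity.

    Assumed convention: pushing the stick forward (y > 0) drives forward, and
    pushing it right (x > 0) yields a negative (clockwise) turn rate.
    """
    linear = apply_deadzone(y) * max_linear
    angular = -apply_deadzone(x) * max_angular
    return linear, angular
```

The resulting pair would then be sent to the command server like any other operator command.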

By combining head-tracked 360-degree video with direct motion control, the VR Interface gives the operator a teleoperation experience close to being physically present with the robot.